- Author: Jordon Wade

Summary

The concept of soil health brings soil chemical, physical, and biological traits together under a unifying framework. For more background information on the soil health paradigm, see our Focus Topic here (http://ucanr.edu/sites/Nutrient_Management_Solutions/stateofscience/Soil_Health_894/). To adapt to this new paradigm, new metrics must be developed and refined to better communicate changes in soil health. While we have many robust measurements for soil physical and chemical properties (such as bulk density, pH, and others), we need ways to reliably measure and communicate soil biological processes. However, measuring soil biology can be costly and difficult to translate into management recommendations. Mineralizable carbon (or respiration upon rewetting) has gained popularity as a soil health metric among researchers, extension, and soil conservationists largely because it addresses both of these issues simultaneously.

Much like humans, soil microbes emit carbon dioxide (CO2) as they go about their daily metabolic activities. This CO2 comes from the metabolic breakdown of more complex forms of carbon (a process known as carbon mineralization) to access the energy stored in these complex molecules. As microbes do more metabolic “work”, they mineralize more carbon, increasing respiration rates and ultimately producing more CO2. When a soil is dry, most of this metabolic activity subsides. Rewetting redistributes carbon-rich material throughout the soil profile and kicks the microbial community into overdrive for 24-72 hours. This burst of mineralization can then be measured in a soil test lab as an indicator of soil microbial activity. While this process is very straightforward in theory, many of the specifics surrounding its implementation are still unclear. These remaining uncertainties must be resolved in order to provide reliable information to growers wanting to integrate soil health into their management decisions.

A recent study involving UC Davis researchers Will Horwath and Jordon Wade sought to assess the reliability of the metric using soils from across the United States, with California soils being well represented. They found that different labs came up with very different numbers for mineralizable carbon for the same soils, with average differences of ±20% between labs. Traditional soil metrics, on the other hand, vary by as little as 1% between different labs. With so much variability in how it is measured, this metric is not yet ready to stand alone as a reliable component of a soil health toolbox. However, extensive local calibration and replication can help to overcome this obstacle until a larger-scale solution is found.

Research Details

This study was primarily interested in reliability within and between soil test labs, although several methodological considerations were also examined. While the takeaways of this study are relatively straightforward, the intricacies of the methodology and inter-lab comparisons are a bit more involved.

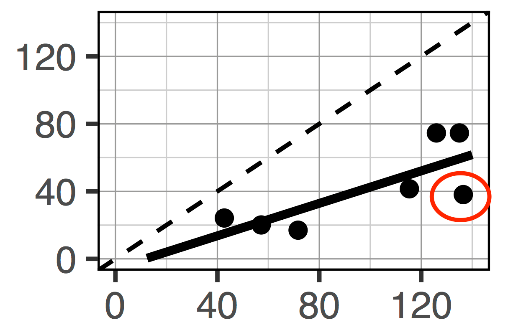

Between lab variation: After sending a set of 7 California soils to three commercial labs and running them here at UC Davis, a very low level of agreement was found. Although there was agreement in the general trend—the high values were generally higher from all labs and the lower values were low from all labs—the absolute values varied considerably. On the surface, this is a seemingly contradictory finding. However, the graph below helps to illustrate the point using data from two of the labs. The results have an acceptable R-squared value (R2=0.61), but the individual points differ from one another (greater similarity between the two labs would be indicated by points being closer to the 1:1 dotted line). For example, the point circled in red would get a value of 40 from one lab and a value of 140 from another; an increase of approximately 250%. When a separate set of 20 soils from across the US were retested in commercial labs, the average variation between the labs was ±20% for a given soil. This value was much higher than traditional lab metrics, which had variations as low as 1%.
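To make the arithmetic behind the circled point concrete, here is a minimal Python sketch. The `percent_increase` and `pearson_r` helpers and the `lab_a`/`lab_b` values are hypothetical illustrations, not data or code from the study. The second part shows how two labs can track each other closely (a correlation near 1) while still sitting far from the 1:1 line.

```python
from math import sqrt


def percent_increase(low, high):
    """Relative increase from `low` to `high`, expressed in percent."""
    return (high - low) / low * 100.0


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# The circled point from the text: 40 from one lab, 140 from the other.
print(percent_increase(40, 140))  # 250.0

# Hypothetical values: lab B always reads 2.5 times what lab A reads.
lab_a = [20.0, 40.0, 60.0, 80.0]
lab_b = [2.5 * a for a in lab_a]

# The trend agrees almost perfectly, yet no point is near the 1:1 line.
print(round(pearson_r(lab_a, lab_b), 3))
print([percent_increase(a, b) for a, b in zip(lab_a, lab_b)])
```

In other words, a good R-squared only says the labs rank soils similarly. It does not say they report similar numbers.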

Within lab variation: While variation between labs was considerable, variation within a lab can also occur. To better understand how much variation can be expected within a lab, yet another set of 73 soils was run in triplicate in the same lab to see how much mineralizable carbon values can differ. The variation ranged from 0.5 to 84.4%, with an average of 18% between the three replicates. When the researchers investigated this finding further, they found that the variation in mineralizable carbon was largely soil-specific. In practice, this means that for a “true” mineralizable carbon value of 100, you would expect measured values to range, on average, from 82 to 118. However, the range could also be 99-101 or 16-184, depending on the soil. The study could not explain what drives this soil-to-soil variability in mineralizable carbon.
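The ranges quoted above follow from applying a percent variation symmetrically around the “true” value. The short Python sketch below reproduces them. The `expected_range` function is a hypothetical helper, and treating the variation as a symmetric plus-or-minus band is an assumption made for illustration.

```python
def expected_range(true_value, variation_pct):
    """Return the (low, high) band for a value with +/- variation_pct percent."""
    delta = true_value * variation_pct / 100.0
    return (true_value - delta, true_value + delta)


# Minimum, average, and maximum within-lab variation reported in the text.
for pct in (0.5, 18.0, 84.4):
    low, high = expected_range(100.0, pct)
    print(f"{pct}% variation: {low:.1f} to {high:.1f}")
```

The text rounds the extremes to 99-101 and 16-184. The unrounded bands are slightly narrower at the low end and slightly wider at the high end.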

The large amount of uncertainty associated with measuring mineralizable carbon makes it challenging to use this metric to inform management decisions. Differences between soils could be obscured or artificially created by this uncertainty. Therefore, two recommendations should be considered when utilizing this soil health metric.

- Exercise caution when interpreting mineralizable carbon values. This could involve a relaxation of the traditional statistical thresholds for p-values (see https://dl.sciencesocieties.org/publications/sssaj/articles/80/5/1352).

- Only analyze the mean of several measurements from the same sample (i.e., use analytical replication). While replication will increase the overall cost of analysis, it will also allow for greater confidence in the values obtained. The greater the number of replicates, the greater the certainty in the mineralizable carbon values returned. For additional information and to read the full study, please click here (https://dl.sciencesocieties.org/publications/sssaj/articles/82/1/243).
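As a rough illustration of why replication helps, the Python sketch below uses the standard result that the uncertainty of a mean shrinks with the square root of the number of independent replicates. Treating the 18% average within-lab variation as a relative standard deviation is an assumption made here for illustration, and `standard_error_pct` is a hypothetical helper rather than anything from the study.

```python
from math import sqrt


def standard_error_pct(variation_pct, n_replicates):
    """Relative uncertainty (percent) of the mean of n independent replicates."""
    return variation_pct / sqrt(n_replicates)


# Assumed single-measurement variation of 18% (the within-lab average above).
for n in (1, 3, 5, 9):
    print(n, round(standard_error_pct(18.0, n), 1))
# 1 18.0
# 3 10.4
# 5 8.0
# 9 6.0
```

Under these assumptions, going from one measurement to three cuts the uncertainty by a little over 40%, while each additional replicate after that buys less.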

Comparison of data from two different labs

- Author: Aubrey Thompson

In June, the California Department of Food & Agriculture (CDFA) will release a call for grant proposals from farmers and ranchers to fund management practices that improve soil health. CDFA will provide almost $4 million in grants for practices such as mulch and compost addition, conservation plantings, cover cropping, reduced tillage, and more, and another $3 million for demonstration projects. The funding comes from the state's cap-and-trade program and must be invested in activities that reduce greenhouse gas emissions and/or sequester carbon by improving soil health.

Join us on this webinar to get a preview of the program. The webinar is offered in partnership with the UC Sustainable Agriculture Research and Education Program (SAREP) and California Climate & Agriculture Network (CalCAN).

Topics that will be covered:

•What policies led up to the launch of the Healthy Soils Initiative?

•What practices will be incentivized?

•What will be required of growers in applying?

•What is the timeline and next steps?

In addition, webinar participants will hear about UC SAREP's work related to healthy soils.

Details:

Tuesday, May 30

10:00am-11:00am

Register for the webinar here:

https://zoom.us/meeting/register/0f72f8e741098877cde7dc3c8da9331e

With questions about the webinar, contact Aubrey Thompson at UC SAREP - abthompson@ucdavis.edu

With questions about the Healthy Soils Initiative, contact Renata Brillinger at CalCAN - renata@calclimateag.org

- Author: Yoni Cooperman

Alfalfa has a long and storied history in California agriculture. First introduced in the state during the gold rush of 1849-1850, alfalfa is now a crop in which California leads the nation. Between 2013 and 2015, an average of 815,000 acres of alfalfa were harvested in the state. Statewide alfalfa yields increased to 5,451,000 tons in 2015 and California now accounts for over 9% of total U.S. production. Alfalfa serves as an important source of hay while also proving useful as a ‘green manure’ that can provide nitrogen for the following crops. That being said, alfalfa has relatively high water demands, a particularly vexing issue due to the current statewide drought. While the majority of alfalfa cropping systems utilize flood irrigation, sub-surface drip irrigation (SSDI) has become an attractive option to many growers seeking to reduce water usage. The type of irrigation utilized can also influence nitrous oxide (N2O) emissions. N2O is a potent greenhouse gas 300 times more powerful than carbon dioxide in warming the planet. Agriculture accounts for more than 60% of statewide N2O emissions from human activity. It has been shown that SSDI reliably reduces N2O emissions in other cropping systems.

UC Davis Land, Air, and Water Resources graduate student Ryan Byrnes recently completed a year-long study investigating the potential for SSDI to mitigate N2O emissions from alfalfa production. The study compared rates of N2O production in side-by-side alfalfa systems, one utilizing check flood irrigation while the other had an SSDI system installed. “We found that yearly emissions were significantly reduced by the adoption of SSDI.” Sustained soil moisture drives N2O emissions, and he noted that “SSDI keeps the soil surface dry, potentially reducing N2O emissions.” While large bursts of N2O emissions are often observed following the rewetting of dry soil, Ryan pointed out that “the pulse emissions in the SSDI plots was lower than in the flood plots.” The emissions following the first rains were not high enough in the SSDI plots to offset the reductions observed through the rest of the season. While additional studies are needed to draw any definitive conclusions, the findings of this study are encouraging. Stay tuned to this space for reports on future studies investigating the potential for SSDI to mitigate statewide N2O emissions.

- Author: Yoni Cooperman

Sequestering Carbon in the Soil Using Biochar

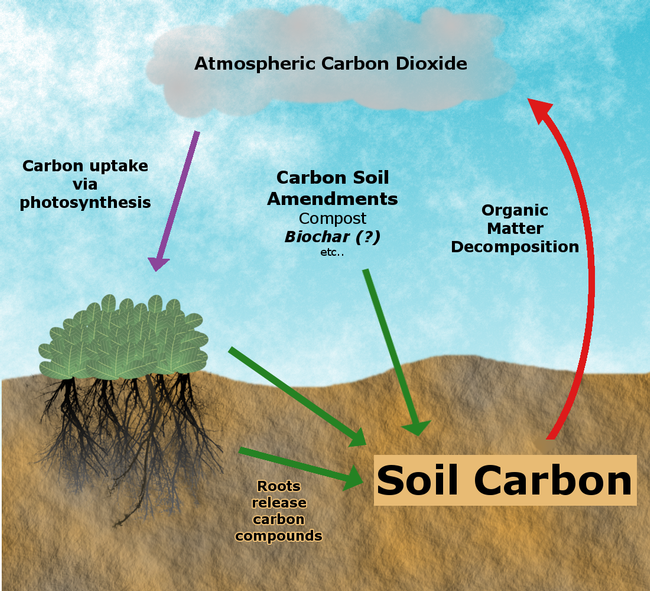

Soils store three times more carbon than exists in the atmosphere. Plants absorb atmospheric carbon during photosynthesis, so the return of plant residues to the soil contributes to soil carbon. While much of this carbon ultimately returns to the atmosphere as soil microbes decompose carbon-based plant biomass and release carbon dioxide, soil carbon stores can increase if the rate of carbon inputs exceeds the rate of microbial decomposition. Carbon sequestration refers to this process of storing carbon in soil organic matter and thus removing carbon dioxide from the atmosphere.

Biochar is produced by burning organic material at high temperatures with little to no oxygen available. The potential of utilizing biochar to sequester carbon in the soil has received considerable research attention in recent years as part of efforts to develop climate-smart agricultural practices. As the majority of biochar is carbon (70-80%), it can potentially contribute more carbon than plant residue (approximately 40% carbon) of similar mass. Furthermore, around 60% of this biochar organic carbon is of high stability and therefore resists decomposition more so than plant material that has not been processed into biochar. That being said, many questions remain as to the effectiveness of biochar application in sequestering carbon.
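The comparison above can be worked through with simple arithmetic. The Python sketch below uses only the percentages given in the text. The 1,000 kg application mass and the function names are hypothetical choices made for illustration.

```python
def carbon_added(mass_kg, carbon_fraction):
    """Mass of carbon (kg) contained in an amendment of the given mass."""
    return mass_kg * carbon_fraction


def stable_carbon(mass_kg, carbon_fraction, stable_fraction):
    """Mass of carbon (kg) in the high-stability pool of the amendment."""
    return carbon_added(mass_kg, carbon_fraction) * stable_fraction


mass = 1000.0  # hypothetical application mass in kg

# Biochar at 70-80% carbon versus plant residue at roughly 40% carbon.
print(round(carbon_added(mass, 0.70)), round(carbon_added(mass, 0.80)))  # 700 800
print(round(carbon_added(mass, 0.40)))  # 400

# Around 60% of the biochar carbon is described as highly stable.
print(round(stable_carbon(mass, 0.70, 0.60)), round(stable_carbon(mass, 0.80, 0.60)))  # 420 480
```

On these figures, the stable portion of the biochar carbon alone (420 to 480 kg) is comparable to or greater than all of the carbon in the same mass of plant residue (about 400 kg).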

(For more information about biochar, check out our recent blog post)

Persistence of Biochar Carbon in Soil

While biochar does contain high levels of carbon, there remains uncertainty as to how long that carbon will persist in the soil following application. The inherent characteristics of the biochar--as dictated by feedstock and pyrolysis conditions--interact with climatic conditions such as precipitation and temperature to influence how long biochar carbon remains stored in the soil. Recent studies suggest that shorter pyrolysis times and higher pyrolysis temperatures make for more recalcitrant biochar (i.e. it persists for longer periods in the soil). However, there are trade-offs involved as these pyrolysis conditions produce less biochar per unit feedstock. As is so often the case, soil texture plays a key role in determining the persistence of biochar carbon. Biochar becomes stabilized in the soil by interacting with soil particles. Clay particles have more surface area for biochar to interact with and are therefore more effective at stabilizing biochar.

The Priming Effect

A number of studies have observed an increase in the rate of organic matter decomposition following biochar application. This so-called “priming effect” complicates any efforts to sequester carbon as this increase in microbial activity could result in decomposition rates exceeding carbon input rates (see figure above). While the exact mechanism responsible for this effect has not been conclusively identified, it may result from the stimulation of microbial activity as microbes utilize carbon and nitrogen present in biochar.
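A toy carbon balance can show why priming matters for sequestration. In the Python sketch below, the rates and the `priming_factor` multiplier are hypothetical numbers chosen only to illustrate the idea. They are not measurements from any study cited here.

```python
def net_carbon_change(input_rate, decomposition_rate, priming_factor=1.0):
    """Net change in soil carbon per unit time (inputs minus decomposition).

    priming_factor scales decomposition: 1.0 means no priming, and values
    above 1.0 mean decomposition is stimulated after biochar application.
    """
    return input_rate - decomposition_rate * priming_factor


# Hypothetical rates in arbitrary units of carbon per year.
print(net_carbon_change(2.0, 1.5))                      # 0.5  (soil gains carbon)
print(net_carbon_change(2.0, 1.5, priming_factor=1.5))  # -0.25 (soil loses carbon)
```

With the same inputs, a large enough increase in decomposition flips the balance from a net gain to a net loss, which is the concern described above.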

Biochar remains a hot topic with regard to increasing soil carbon stores and helping fight climate change. However, many questions remain before definitive conclusions can be drawn about what conditions allow biochar to contribute positively to soil carbon sequestration.

Sources

Ontl, T. A. & Schulte, L. A. (2012) Soil Carbon Storage. Nature Education Knowledge 3(10):35

Lal, R. (2016). Biochar and Soil Carbon Sequestration. Agricultural and Environmental Applications of Biochar: Advances and Barriers. M. Guo, Z. He and S. M. Uchimiya. Madison, WI, Soil Science Society of America, Inc.: 175-198.

Stewart, C. E., et al. (2013). "Co-generated fast pyrolysis biochar mitigates green-house gas emissions and increases carbon sequestration in temperate soils." GCB Bioenergy 5(2): 153-164.

Yang, F., et al. (2016). "The Interfacial Behavior between Biochar and Soil Minerals and Its Effect on Biochar Stability." Environmental Science & Technology 50(5): 2264-2271.

- Author: Yoni Cooperman

- Contributor: Deirdre Griffin

In the ongoing quest to develop sustainable agricultural practices, growers are looking for new and inventive technologies. In this blog post, we'll focus on biochar, one such technology that has been a focus of intense research in recent years. Biochar is produced by burning organic material at extreme temperatures as high as 1600° F with little to no oxygen available. Oftentimes biochar is a by-product of energy production, but it can also be produced solely to be used as a soil amendment.

There are a few reasons growers might incorporate biochar into their cropping systems. Biochar's high surface area allows it to act as a reservoir of water while increasing the retention of nutrients such as calcium, magnesium, and ammonium. This is especially useful in sandier soils with low cation exchange capacity. Biochar can also serve as a liming agent to increase soil pH, which increases nutrient availability in acidic soils. Additionally, biochars with high ash content can contain calcium and potassium that plants can use. Biochar inputs are also high in carbon. Stay tuned to this blog for another post highlighting the potential for biochar to increase soil carbon storage.

Feedstock – the organic material used to produce biochar – varies widely. Common feedstocks include wood chips, nut shells, and grasses. In California, nut shells stand out as a potentially useful feedstock due to the state's large nut industry. Biochar can also be produced from manures. Both feedstock and production temperature influence how biochar will behave in the soil. Dr. Sanjai Parikh's lab at the University of California, Davis has developed a biochar database that includes both of these characteristics.

Initial interest in biochar stemmed from the study of the Terra Preta soils in South America. These generally low fertility, acidic oxisols were able to sustain higher productivity than nearby non-Terra Preta soils while also accumulating organic matter. One of the reasons for this productivity was the addition of charcoal by indigenous farmers thousands of years ago. The hope was to mimic this in a modern agricultural setting.

Like most agricultural practices, biochars present some challenges for effective integration into a cropping system. Like compost or manure, it can be difficult to predict when nutrients from biochar will become plant available or how a char will interact with a particular soil. Different soil types require different rates of biochar application. For example, a clay loam would require more biochar to increase pH when compared to a sandy soil as a result of the clay loam's higher buffering capacity (see figure below).

UC Davis Soils and Biogeochemistry graduate student Deirdre Griffin is researching how soil microbes respond to biochar additions. She explains that “while biochars can sometimes serve as a source of labile carbon to spur microbial activity, some chars can give off inhibitory compounds that may reduce microbial activity.” In particular, she is looking at whether biochars with high sorption capacity (i.e. the ability to hold on to compounds in the soil) can interfere with signaling between legumes and soil bacteria that fix nitrogen and make it available to plants. She is careful to note that “others have found biochars to increase nodulation in legumes.”

All in all, “the leaders in the field recognize that while there are many benefits of biochar, there can also be negative impacts…There was a burst of [research] excitement followed by some backlash, and now things are starting to even out.” Biochar can serve as a tool for sustainable production systems, but it isn't appropriate for every situation. Continued research will illuminate what types of biochar are suitable for different soils.