Using Lidar to map forest structure and characterize wildlife habitat

UC scientists with the Sierra Nevada Adaptive Management Project (SNAMP) are investigating how lidar (light detection and ranging) can provide detailed information on how forest habitat is affected by fuels management treatments across a large landscape. Mapping forest structure can illustrate how a forest influences surface hydrology, how it provides for wildlife, and how it might burn under given weather and wind patterns. This research is proving useful in wildlife studies, water quantity and fire modeling, and forest planning.

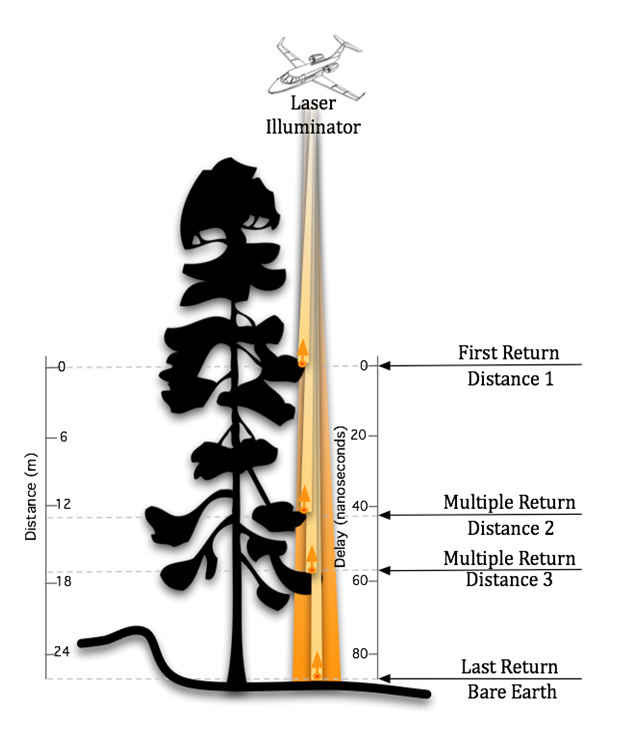

Airborne lidar works by sending a light pulse from an emitter onboard a plane toward a ground target. A portion of the light is reflected back to the airborne sensor and recorded. The time between sending out the light pulse and receiving it back is converted into distance.
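
The time-to-distance conversion is simple enough to sketch in a few lines. The function below is only an illustration, not SNAMP's processing code: the name `pulse_range_m` is made up for this example, and it assumes the pulse travels at the vacuum speed of light (in air the speed is very slightly lower). The travel time is halved because the pulse covers the sensor-to-target distance twice.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; an assumption for this sketch


def pulse_range_m(round_trip_time_s: float) -> float:
    """Return the one-way distance (meters) for a pulse's round-trip time (seconds)."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse goes out and comes back, so only half the travel time
    # corresponds to the sensor-to-target distance.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A pulse that returns after about 6.67 microseconds hit something
    # roughly one kilometer away.
    print(round(pulse_range_m(6.67e-6), 1), "m")
```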

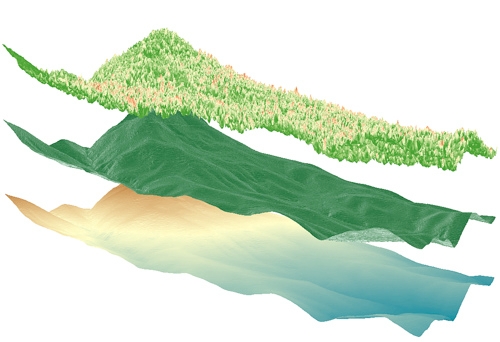

This data, along with GPS information on the aircraft’s exact position and orientation, allows scientists to calculate the height of a target and create 3D maps of the forest vegetation and the bare ground. Raw lidar data is first received as a ‘point cloud,’ which consists of millions of points from which meaningful and detailed measurements can be extracted.
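
To give a feel for how vegetation height comes out of a point cloud, here is a minimal sketch that subtracts the ground elevation from the highest return in each grid cell. It rests on assumptions that are not from the SNAMP workflow: each point is a hypothetical `(x, y, z, is_ground)` tuple that has already been labeled as ground or not, and cells with no ground return are simply skipped, whereas real processing would filter and interpolate the ground surface.

```python
def canopy_height_by_cell(points, cell_size_m=1.0):
    """Map each grid cell (col, row) to canopy height in meters.

    points: iterable of (x, y, z, is_ground) tuples; this layout is an
    assumption made for the example.
    """
    ground = {}  # lowest ground elevation seen in each cell
    top = {}     # highest elevation of any return in each cell
    for x, y, z, is_ground in points:
        cell = (int(x // cell_size_m), int(y // cell_size_m))
        if is_ground:
            ground[cell] = min(z, ground.get(cell, z))
        top[cell] = max(z, top.get(cell, z))
    # Height above ground = highest return minus ground elevation.
    # Cells without a ground return are left out rather than guessed.
    return {cell: top[cell] - ground[cell] for cell in top if cell in ground}


if __name__ == "__main__":
    sample = [
        (0.2, 0.3, 100.0, True),   # ground return
        (0.5, 0.5, 125.0, False),  # canopy return in the same cell
        (1.5, 0.5, 101.0, True),   # bare ground, no vegetation above it
    ]
    print(canopy_height_by_cell(sample))
```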

Some of the current SNAMP research focuses on developing new methods to detect and delineate individual trees from the point cloud, even in complex mixed conifer forests and rugged terrain. The SNAMP spatial team recently published a method that maps all the trees in the forest with 90 percent accuracy. The individual tree identification method identifies trees from the tallest to the shortest and is especially useful in mapping wildlife habitat. For example, the SNAMP spatial and California spotted owl teams collaborated using lidar to map large trees and canopy cover in spotted owl territories. These habitat features are often hard to identify and map over large areas. Lidar data was used to measure the number, density and pattern of large trees in areas used by the spotted owl. These kinds of data can also be used to understand the forest habitat of other bird species and in Pacific fisher research.
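
The idea of working from the tallest tree to the shortest can be illustrated with a toy example. This is not the published SNAMP method, only a simplified stand-in: it walks through points in order of decreasing height and calls a point a treetop if no taller treetop has already been found within a fixed horizontal spacing. The function name, the `(x, y, height)` layout, and both thresholds are hypothetical choices for the sketch.

```python
import math


def find_treetops(points, min_spacing_m=3.0, min_height_m=2.0):
    """Pick candidate treetops from (x, y, height_above_ground_m) points.

    Points are visited tallest first; a point becomes a treetop only if it
    is at least min_spacing_m away from every treetop already accepted.
    """
    treetops = []
    for x, y, h in sorted(points, key=lambda p: p[2], reverse=True):
        if h < min_height_m:
            break  # everything after this is lower still, so stop
        if all(math.hypot(x - tx, y - ty) >= min_spacing_m
               for tx, ty, _ in treetops):
            treetops.append((x, y, h))
    return treetops


if __name__ == "__main__":
    sample = [
        (0.0, 0.0, 30.0), (0.5, 0.5, 28.0),   # one tall crown
        (10.0, 0.0, 20.0), (10.4, 0.2, 18.0),  # a second, shorter crown
        (5.0, 5.0, 1.0),                       # low shrub, below threshold
    ]
    tops = find_treetops(sample)
    large = [t for t in tops if t[2] >= 25.0]
    print(len(tops), "trees found,", len(large), "of them 25 m or taller")
```

A fixed spacing is the crudest possible rule; it would merge neighboring trees in dense stands and split wide crowns, which is part of why delineation in mixed conifer forest is a research problem in its own right.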

Our goal is to provide methods to map the forest in detail, and thus to help forest managers anticipate the impacts of management decisions.

Images by SNAMP Spatial team/Kelly Labs, UC Berkeley