Posts Tagged: cloud

Esri User Conference 2017 – Day 2, the first day of talks and technical sessions

Of all the technical sessions and talks I went to today, the most exciting topic was “ArcGIS Maps for Adobe Creative Cloud”. The plugin for Adobe Creative Cloud is a bridge between ArcGIS and Adobe Illustrator and Photoshop. It allows cartographers and graphic designers to import vector and raster data directly from ArcGIS Online and from shapefiles into Illustrator and Photoshop. Once there, the data can be manipulated and styled using the native tools in each application.

This tool fills a need that cartographers have had for many years. I foresee these tools being very important in allowing graphic designers within our organization to extend the spatial data that we have created to publications and other materials that UCANR produces.

To download and start using these tools you will need access to Adobe Creative Cloud (https://exchange.adobe.com/addons/products/16913) and an ArcGIS for Organizations account (http://www.arcgis.com). If you are a member of the UCANR network and do not have access to ArcGIS Online, fill out the following form and we will help you gain access: http://igis.ucanr.edu/resources/esri_software/.

Notes and stray thoughts:

- ArcGIS Pro 2.0 and ArcGIS 10.5.1 were released in advance of the User Conference this year. To see what is new in these releases, go to http://pro.arcgis.com/en/pro-app/get-started/whats-new-in-arcgis-pro.htm for ArcGIS Pro 2.0 and http://desktop.arcgis.com/en/arcmap/latest/get-started/introduction/whats-new-in-arcgis.htm#ESRI_SECTION1_76DF146740D047C78D38DC5FFF917CCD for ArcGIS 10.5.1.

- ArcGIS Pro and ArcGIS will have new releases every 6 months going forward.

- As always, the ESRI User Conference is a well done production with professional presentation and branding.

- Being at the ESRI Conference is always a nice opportunity to see old friends in the GIS community.

More to Come Tomorrow

Day 2 Wrap Up from the NEON Data Institute 2017

First of all, Pearl Street Mall is just as lovely as I remember, but OMG it is so crowded, with so many new stores and chains. Still, good food, good views, hot weather, lovely walk.

Welcome to Day 2! http://neondataskills.org/data-institute-17/day2/

Our morning session focused on reproducibility and workflows with the great Naupaka Zimmerman. Remember the characteristics of reproducibility: organization, automation, documentation, and dissemination. We focused on organization, and spent an enjoyable hour sorting through an example messy directory of misc data files and code. The directory looked a bit like many of my directories. Lesson learned. We then moved to working with new data and git to reinforce yesterday's lessons. Git was super confusing to me 2 weeks ago, but now I think I love it. We also went back and forth between Jupyter and standalone Python scripts, and abstracted variables, and lo and behold I got my script to run.
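As a rough sketch of what "abstracting variables" out of a script can look like: hard-coded file names become function parameters and command-line arguments, so the same script runs on new data. This is not the institute's lesson code; the folder layout, file names, and function names are all hypothetical.

```python
from pathlib import Path
import argparse


def build_output_path(input_path, output_dir="outputs", suffix="_summary.csv"):
    """Derive the output file name from the input instead of hard-coding it."""
    input_path = Path(input_path)
    return Path(output_dir) / (input_path.stem + suffix)


def main(argv=None):
    """Parse the command line (or a list of strings) and report the output path."""
    parser = argparse.ArgumentParser(description="Summarize one data file.")
    parser.add_argument("input_path", help="path to the raw data file")
    parser.add_argument("--output-dir", default="outputs")
    args = parser.parse_args(argv)
    out_path = build_output_path(args.input_path, args.output_dir)
    print(f"Would write summary to {out_path}")
    return out_path


# Hypothetical input file; passing a list stands in for real command-line arguments.
example_output = main(["data/raw/site_a.csv"])
```

Keeping the path logic in its own small function also makes it easy to reuse from a Jupyter notebook and from a standalone script.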

The afternoon focused on Lidar (yay!) and prior to coding we talked about discrete and waveform data and collection, and the OpenTopography (http://www.opentopography.org/) project with Benjamin Gross. The OpenTopography talk was really interesting. They are not just a data distributor any more; they also provide an HPC framework (mostly TauDEM for now) on their servers at SDSC (http://www.sdsc.edu/). They are going to roll out user-initiated HPC functionality soon, so stay tuned for their new "pluggable assets" program. This is well worth checking into. We also spent some time live coding in Python with Bridget Hass, working with a CHM from the SERC site in California, and had a nerve-wracking code challenge to wrap up the day.
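For readers new to the term, a canopy height model (CHM) is the lidar surface model minus the bare-earth terrain model. Here is a minimal sketch of that subtraction and of binning the heights into classes with NumPy. The grids are made-up values rather than NEON data, and the class breaks are an assumption chosen for illustration, not the ones used in the session.

```python
import numpy as np

# Synthetic 3x3 elevation grids in metres (made-up values, not NEON data).
dsm = np.array([[105.0, 112.0, 130.0],
                [101.0, 118.0, 125.0],
                [100.0, 100.5, 140.0]])  # surface: ground plus vegetation
dtm = np.full((3, 3), 100.0)             # bare-earth terrain

# Canopy height is the surface elevation minus the terrain elevation.
chm = dsm - dtm

# Hypothetical class breaks in metres: <2, 2 to <10, 10 to <20, >=20.
breaks = [2.0, 10.0, 20.0]
height_class = np.digitize(chm, breaks)  # class index 0..3 for every cell

print(height_class)
```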

Fun additional take-home messages/resources:

- ISO International standard for dates = YYYY-MM-DD

- Missing values in R = NA, in Python = -9999

- For cleaning messy data, check out OpenRefine, a free and open source tool: http://openrefine.org/

- Excel is cray-cray, best practices for spreadsheets: http://www.datacarpentry.org/spreadsheet-ecology-lesson/

- Morpho (from DataOne) to enter metadata: https://www.dataone.org/software-tools/morpho

- Pay attention to file size with your git repositories - check out: https://git-lfs.github.com/. Git is good for things you do with your hands (like code), not for large data.

- Funny how many food metaphors are used in tech teaching: APIs as a menu in a restaurant; git add vs git commit as a grocery cart before and after purchase; finding GIS data is sometimes like shopping for ingredients in a specialty grocery store (that one is mine)...

- Markdown renderer: http://dillinger.io/

- MIT License, like Creative Commons for code: https://opensource.org/licenses/MIT

- "Jupyter" means it runs with Julia, Python & R, who knew?

- There is a new project called "Feather" that allows data frames to be shared between Python and R: https://blog.rstudio.org/2016/03/29/feather/

- All the NEON airborne data can be found here: http://www.neonscience.org/data/airborne-data

- Information on the TIFF specification and TIFF tags here: http://awaresystems.be/. However, their TIFF Tag Viewer is only for Windows.
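Two of the take-home messages above, ISO dates and missing values, can be sketched with the Python standard library. The date and the readings are made up for illustration. Note that -9999 is a no-data sentinel stored in a file rather than something built into Python, so this sketch assumes it has to be converted to NaN before computing statistics.

```python
from datetime import date
import math

# ISO 8601 calendar dates (YYYY-MM-DD) also sort correctly as plain text.
d = date(2017, 6, 20)                  # example date only
iso_text = d.isoformat()               # '2017-06-20'
parsed = date.fromisoformat(iso_text)  # round-trips back to a date object

# Assumption: the data file flags missing readings with -9999.
NO_DATA = -9999
raw = [12.5, -9999, 14.0, 13.5]        # made-up readings

# Replace the sentinel with NaN, then skip NaN when averaging.
clean = [math.nan if v == NO_DATA else v for v in raw]
valid = [v for v in clean if not math.isnan(v)]
mean_valid = sum(valid) / len(valid)

print(iso_text, round(mean_valid, 2))
```

Leaving the sentinel in place would drag the mean far below zero, which is why it is worth masking as early as possible.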

Thanks to everyone today! Megan Jones (our fearless leader), Naupaka Zimmerman (Reproducibility), Tristan Goulden (Discrete Lidar), Keith Krause (Waveform Lidar), Benjamin Gross (OpenTopography), Bridget Hass (coding lidar products).

Our home for the week

Cloud-based raster processors out there

Hi all,

Just trying to get my head around some of the new big raster processors out there, in addition of course to Google Earth Engine. Bear with me (bare?) while I sort through these. Thanks to raster sleuth Stefania Di Tomasso for the leg work.

1. GeoTrellis (https://geotrellis.io/)

GeoTrellis is a Scala-based raster processing engine, and it is one of the first geospatial libraries on Spark. GeoTrellis is able to process big datasets. For regional or statewide datasets, users can interact with geospatial data and see results in real time in an interactive web application. For larger raster datasets (e.g., the US NED), GeoTrellis performs fast batch processing, using Akka clustering to distribute data across the cluster. GeoTrellis was designed to solve three core problems, with a focus on raster processing:

- Creating scalable, high performance geoprocessing web services;

- Creating distributed geoprocessing services that can act on large data sets; and

- Parallelizing geoprocessing operations to take full advantage of multi-core architecture.

Features:

- GeoTrellis is designed to help a developer create simple, standard REST services that return the results of geoprocessing models.

- GeoTrellis will automatically parallelize and optimize your geoprocessing models where possible.

- In the spirit of the object-functional style of Scala, it is easy to both create new operations and compose new operations with existing operations.
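To make the ideas of composing operations and parallelizing them over a raster more concrete, here is a small Python and NumPy sketch. It is not the GeoTrellis API: the function names, the row-strip tiling, and the two example operations are all assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
import numpy as np


def compose(*ops):
    """Chain single-array operations left to right into one operation."""
    def composed(tile):
        for op in ops:
            tile = op(tile)
        return tile
    return composed


def split_rows(raster, n_tiles):
    """Split a 2-D array into horizontal strips (a very simple tiling scheme)."""
    return np.array_split(raster, n_tiles, axis=0)


def process_in_parallel(raster, operation, n_tiles=4):
    """Apply a per-cell operation to each tile in a worker pool, then reassemble."""
    tiles = split_rows(raster, n_tiles)
    with ThreadPoolExecutor(max_workers=n_tiles) as pool:
        results = list(pool.map(operation, tiles))  # map preserves tile order
    return np.vstack(results)


def to_feet(tile):
    """Hypothetical local operation: convert metres to feet."""
    return tile * 3.28084


def mask_low(tile):
    """Hypothetical local operation: zero out cells below 10."""
    return np.where(tile < 10.0, 0.0, tile)


model = compose(to_feet, mask_low)
raster = np.arange(48, dtype=float).reshape(8, 6)  # synthetic raster
result = process_in_parallel(raster, model)
```

This only works as-is for local (cell-by-cell) operations. Neighborhood operations such as slope would need overlapping tile edges, which is part of what a dedicated engine handles for you.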

2. GeoPySpark - in short, GeoTrellis for the Python community

GeoPySpark provides Python bindings for working with geospatial data on PySpark (PySpark is the Python API for Spark). Spark is an open source processing engine originally developed at UC Berkeley in 2009. GeoPySpark makes GeoTrellis (https://geotrellis.io/) accessible to the Python community. Scala is a difficult language, so they have created this Python library.

3. RasterFoundry

ESRI @ GIF Open GeoDev Hacker Lab

We had a great day today exploring ESRI open tools in the GIF. We had a full class of 30 participants and two great ESRI instructors (leaders? evangelists?), John Gravois and Allan Laframboise. We worked through a range of great online mapping (data, design, analysis, and 3D) examples in the morning, and focused on using the ESRI Leaflet API in the afternoon. Here are some of the key resources out there.

- Main ESRI Open Information: http://www.esri.com/software/open

- Slide deck from today: http://slides.com/alaframboise/geodev-hackerlabs#/

- Afternoon example using Leaflet: http://esri.github.io/esri-leaflet/

- ESRI's developer toolkits: https://developers.arcgis.com/en/, including

- ESRI's javascript API: https://developers.arcgis.com/javascript/beta/

Great Stuff! Thanks Allan and John

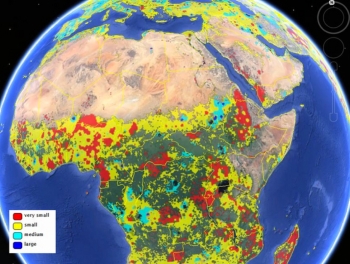

Crowdsourced view of global agriculture: mapping farm size around the world

The researchers built the cropland database by combining information from several sources, such as satellite images, regional maps, video and geotagged photos, which were shared with them by groups around the world. Combining all that information would be an almost-impossible task for a handful of scientists to take on, so the team turned the project into a crowdsourced, online game. Volunteers logged into "Cropland Capture" on a computer or a phone and determined whether an image contained cropland or not. Participants were entered into weekly prize drawings.