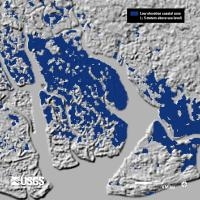

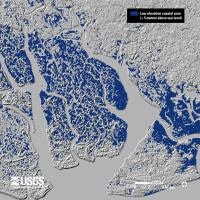

Just in time for class on topography and rasters tomorrow: new high-res shuttle DEM data is being released for Africa. The image above shows the Niger River Delta in 90m res, 30m res, and Landsat.

From the press release: In Africa, accurate elevation (topographic) data are vital for pursuing a variety of climate-related studies that include modeling predicted wildlife habitat change; promoting public health in the form of warning systems for geography and climate-related diseases (e.g. malaria, dengue fever, Rift Valley fever); and monitoring sea level rise in critical deltas and population centers, to name just a few of many possible applications of elevation data.

On September 23, the National Aeronautics and Space Administration (NASA), the National Geospatial-Intelligence Agency (NGA), and the U.S. Geological Survey (USGS, a bureau of the U.S. Department of the Interior) released a collection of higher-resolution (more detailed) elevation datasets for Africa. The datasets were released following the President’s commitment at the United Nations to provide assistance for global efforts to combat climate change. The broad availability of more detailed elevation data across most of the African continent through the Shuttle Radar Topography Mission (SRTM) will improve baseline information that is crucial to investigating the impacts of climate change on African communities.

Enhanced elevation datasets covering remaining continents and regions will be made available within one year, with the next release of data focusing on Latin America and the Caribbean region. Until now, elevation data for the continent of Africa were freely available to the public only at 90-meter resolution. The datasets being released today and during the course of the next year resolve to 30-meters and will be used worldwide to improve environmental monitoring, climate change research, and local decision support. These SRTM-derived data, which have been extensively reviewed by relevant government agencies and deemed suitable for public release, are being made available via a user-friendly interface on USGS’s Earth Explorer website.

Nice slider comparing the 90m to the 30m data here.
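For a rough sense of what the jump from 90m to 30m means in raster terms, here is a minimal sketch of the arithmetic. It assumes the nominal round-number cell sizes quoted in the press release (the SRTM grids are actually posted in arc-seconds, so real cell dimensions vary with latitude), and the function names are made up for illustration.

```python
# Nominal cell sizes in meters, taken from the press release wording.
# Assumption: square cells of exactly 90 m and 30 m on a side.
COARSE_RES_M = 90
FINE_RES_M = 30


def cells_per_square_km(res_m: float) -> float:
    """Number of raster cells needed to cover one square kilometer."""
    return (1000.0 / res_m) ** 2


def density_ratio(coarse_m: float, fine_m: float) -> float:
    """Number of fine cells that cover the footprint of one coarse cell."""
    return (coarse_m / fine_m) ** 2


if __name__ == "__main__":
    print(f"90 m: {cells_per_square_km(COARSE_RES_M):.0f} cells per km^2")
    print(f"30 m: {cells_per_square_km(FINE_RES_M):.0f} cells per km^2")
    ratio = density_ratio(COARSE_RES_M, FINE_RES_M)
    print(f"Each 90 m cell is covered by {ratio:.0f} cells at 30 m")
```

Cutting the cell size to a third multiplies the number of cells by the square of that factor, which is worth keeping in mind for storage and processing time when working with the new tiles.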

- Author: Shane Feirer

The California Climate Commons (http://climate.calcommons.org/) will be hosting a new version of the California Basin Characterization Model, sometimes called the BCM or Flint data (after the modelers, Lorraine and Alan Flint). The new version of the BCM is currently being processed and is planned to be hosted by California Climate Commons in the next month. Lorraine Flint discusses what's new in this dataset in the following Forum. California Climate Commons will be hosting the California model outputs initially and a Nevada dataset will be added later this year. This new dataset features 18 modeled futures; you can read the California Climate Commons article called "Why So Many Climate Models?" to find out more about them as well as learn about how to work with the large number of climate models that are available.

Both the NASS Cropland Data Layer (CDL) and the National Land Cover Database (NLCD) released new versions in early 2014. Links for download are here:

- CDL:
  - NASS users can download the 2013, 2012, 2011, 2010, 2009, and 2008 CDLs, the 2013 confidence layer, and the 2013 cultivated layer from National Downloads (file sizes > 2 GB).
  - View their latest presentations in PDF format.
- NLCD:
  - The National Land Cover Database (NLCD 2011) is made available to the public by the U.S. Geological Survey and partners.

An interesting position piece on the appropriate uses of big data for climate resilience. The author, Amy Luers, points out three opportunities and three risks.

She sums up:

"The big data revolution is upon us. How this will contribute to the resilience of human and natural systems remains to be seen. Ultimately, it will depend on what trade-offs we are willing to make. For example, are we willing to compromise some individual privacy for increased community resilience, or the ecological systems on which they depend?—If so, how much, and under what circumstances?"

Read more from this interesting article here.

In 1998 Al Gore made his now famous speech entitled The Digital Earth: Understanding our planet in the 21st Century. He described the possibilities and need for the development of a new concept in earth science, communication and society. He envisioned technology that would allow us "to capture, store, process and display an unprecedented amount of information about our planet and a wide variety of environmental and cultural phenomena." From the vantage point of our hyper-geo-immersed lifestyle today, his description of this Digital Earth is prescient yet rather cumbersome:

"Imagine, for example, a young child going to a Digital Earth exhibit at a local museum. After donning a head-mounted display, she sees Earth as it appears from space. Using a data glove, she zooms in, using higher and higher levels of resolution, to see continents, then regions, countries, cities, and finally individual houses, trees, and other natural and man-made objects. Having found an area of the planet she is interested in exploring, she takes the equivalent of a 'magic carpet ride' through a 3-D visualization of the terrain."

He said: "Although this scenario may seem like science fiction, most of the technologies and capabilities that would be required to build a Digital Earth are either here or under development. Of course, the capabilities of a Digital Earth will continue to evolve over time. What we will be able to do in 2005 will look primitive compared to the Digital Earth of the year 2020." In 1998, the necessary technologies were: Computational Science, Mass Storage, Satellite Imagery, Broadband Networks, Interoperability, and Metadata.

He anticipated change: "Of course, further technological progress is needed to realize the full potential of the Digital Earth, especially in areas such as automatic interpretation of imagery, the fusion of data from multiple sources, and intelligent agents that could find and link information on the Web about a particular spot on the planet. But enough of the pieces are in place right now to warrant proceeding with this exciting initiative."