- Author: Priyanka Vyas

- Editor: Andy Lyons

- Editor: Sean D Hogan

- Editor: Genoa Starrs

We bring you stories from DroneCamp 2024, now in its 8th year and wrapping up a successful week at CSU Monterey Bay.

“The goals of a prescribed burn are to reduce fuel load as much as you can, improve habitat and work with private landowners. I am especially excited to learn about drones equipped with thermal cameras to study fuel moisture and the ignition patterns of fires,” she said. Barb is hoping to learn object detection methods that can be used to process drone imagery and facilitate prescribed burns.

Hosted at California State University, Monterey Bay, this year's DroneCamp, now in its eighth year, drew on the combined expertise of several UC and CSU campuses and community colleges. It brought together 29 instructors from CSU Monterey Bay, UC ANR, UC Merced, UC Santa Cruz, UC Berkeley, UC Los Angeles, Lakeland Community College, and American River College. The instructors bring deep expertise in drone hardware, flight operations, and processing drone imagery.

Eighty participants, mostly from California, attended the in-person event in Monterey, and another 43 attended virtually. While the virtual participants get access to almost all the sessions that in-person participants get, they miss the most exciting part: learning to fly drones.

“After attending the DroneCamp, I can help farmers collect and report their data better to the funders. I am excited to work with farmers to assess changes in biomass production over time to see how well the plants grew after the grant. We can also take pictures of the field to see whether all the plants are getting uniform water. Depending on where the plants are in relation to the pumps, some may get too much or too little water. We can use drones to see whether the irrigation system is uniform.”

Another application Ana is interested in is assessing the effectiveness of cover crops on farmland. Cover crops are planted between cash crops to provide various benefits and keep the land from lying fallow. “We can use drones to compare different cover crops and how they perform to control weeds in fields.”

When asked how confident she feels about undertaking drone projects independently in her work, Ana said, “DroneCamp gave me the connections I need to reach out to experts when I work on my own project.”

After attending DroneCamp, he feels he has attained the basic skills and knowledge needed to fly a drone over an orchard, along with ideas on how to use drone data to assess crop response to irrigation in a field.

Tobias says that although satellite-based remote sensing is currently used to estimate how much water is used by crops, there are still some questions about its accuracy. These are questions that researchers in the field are working to answer.

He notes: “Considering drones work on a field scale, it could help potentially refine the accuracy that we do not get from other remote sensing platforms.”

DroneCamp also drew students from various universities who have had some GIS training at their academic institutions, but not in mapping with drones. Manjot Singh, a student in plant science and agriculture from Fresno State University, says, “I can get introductory and advanced GIS courses at my university, but there is no drone-related course being offered. Maybe this will help me if I pursue a PhD or make me marketable in future jobs.”

- Author: Andrew J Lyons

- Contact: Sean D Hogan

June 24-28, 2024

CSU Monterey Bay

(plus virtual option)

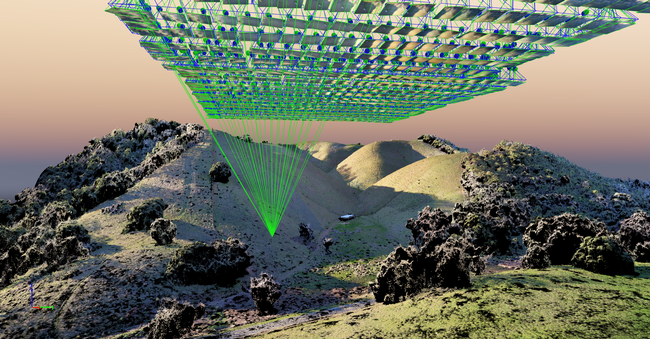

DroneCamp is a premier five-day training program that covers everything you need to know to use drones for mapping and data collection.

Curriculum at a Glance

- equipment

- safety & regulations

- flight planning

- manual & autonomous flight

- photogrammetry

- hands-on data processing with Pix4D, OpenDroneMap & ArcGIS Pro

- data analysis & visualization

- research talks & use cases

- educator track - new for 2024!

Now in its eighth year, DroneCamp 2024 will once again provide a unique drone training opportunity designed for a wide range of skill levels and interests, from complete beginners with little to no experience in drone technology to intermediate users who want to learn more advanced data processing and analysis.

DroneCamp started out as an IGIS workshop, but has evolved into a highly collaborative program with instructors coming from 8 campuses including UC ANR, UC Merced, UC Santa Cruz, CSU Monterey Bay, UC Davis, UC Berkeley, UC Santa Barbara, Lakeland College, and American River College.

DroneCamp 2024 will be held in-person at CSU Monterey Bay June 24-28, 2024. Scholarships to cover the registration fee are available for all ANR academics and staff - but you must apply soon! More info available at DroneCampCA.org.


- Author: Benjamin Satzman

- Author: Andy Lyons

Maps for Cooperative Extension

Settling into a new county can be challenging. One has to figure out where things are, how to get from A to B, and where the greatest needs and opportunities are.

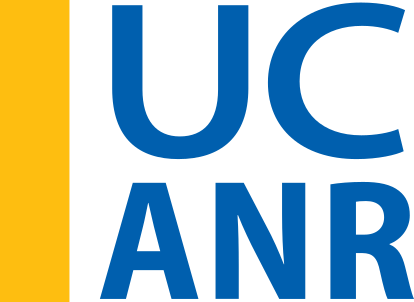

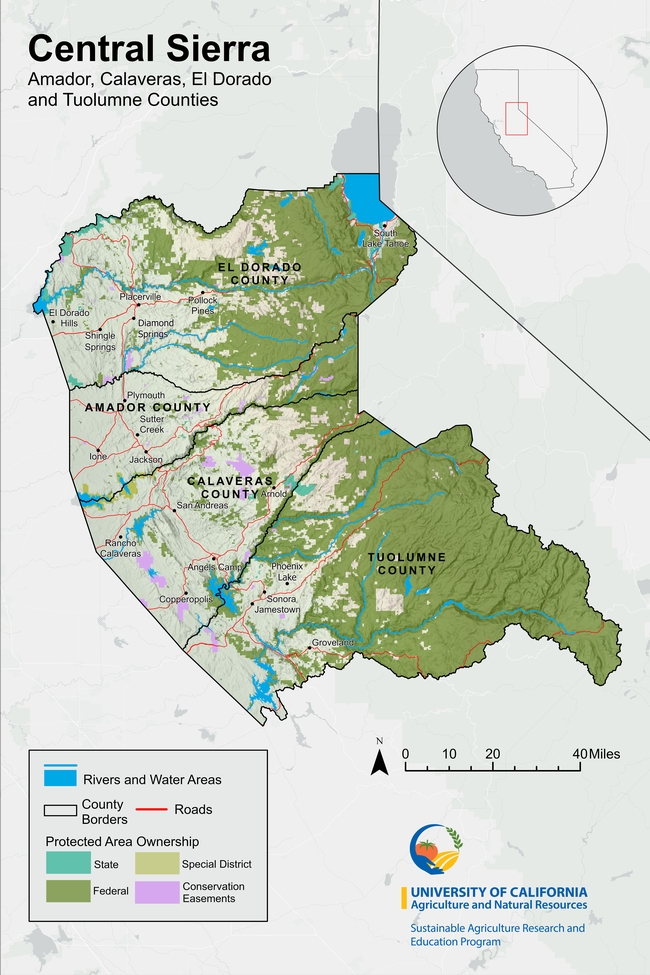

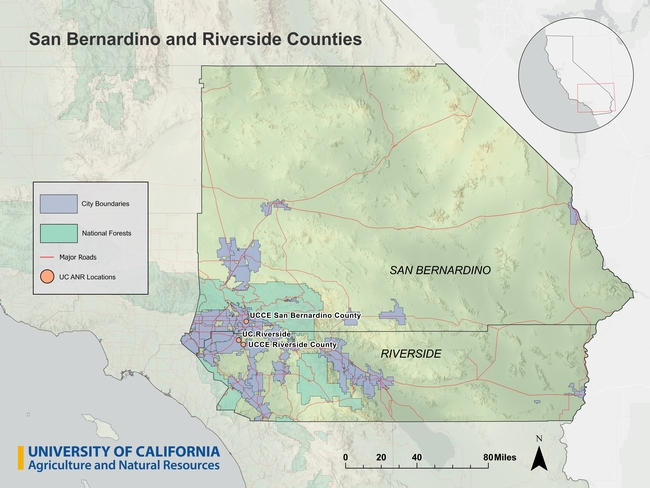

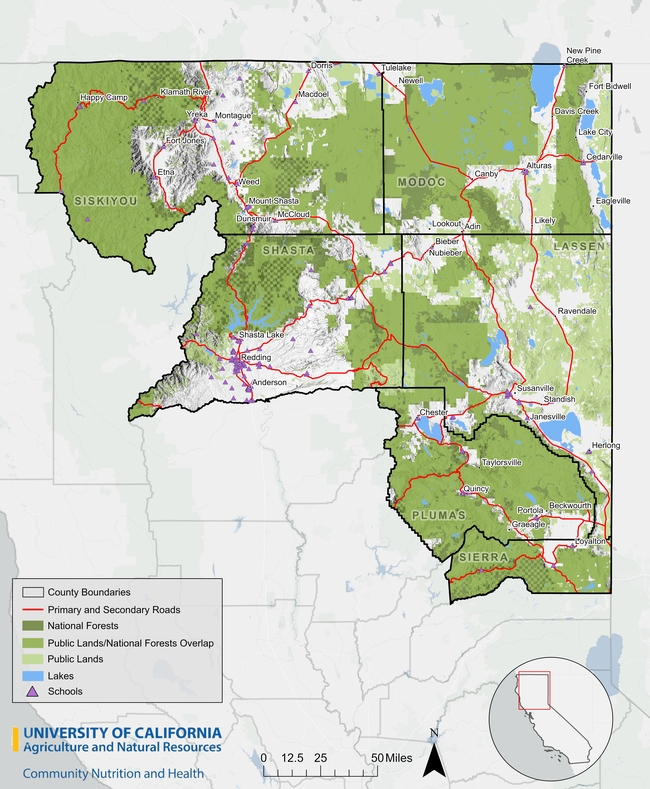

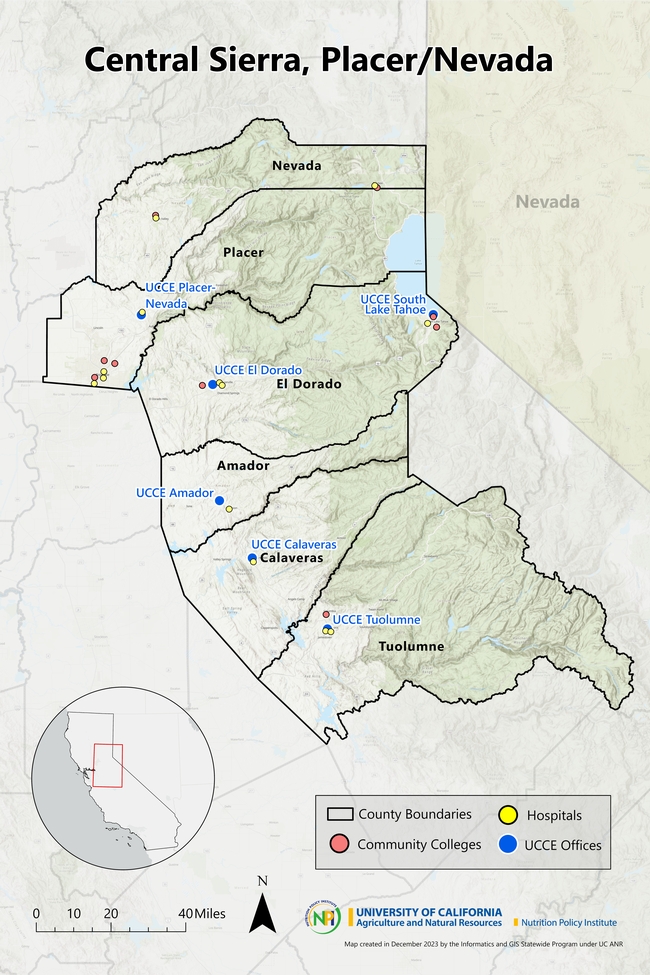

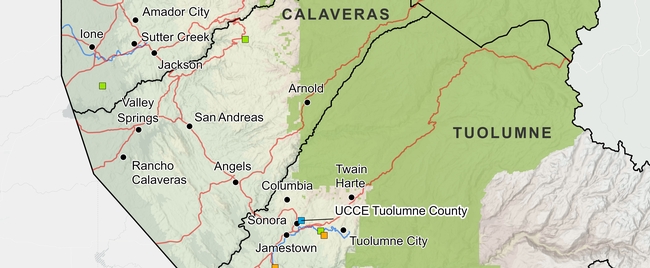

To help new Cooperative Extension Advisors navigate these waters, IGIS launched a new service in the past year called Maps for CE. The goal of Maps for CE, available to CE Advisors and other academics less than two years into their position, is to help our new colleagues get a handle on their coverage area (which often straddles multiple counties), thus building a strong foundation for their research and extension program. We do this by co-designing a custom wall map, web map, or both! To make the map useful for the researcher, reference layers are tailored to the researcher's specific program area and clientele. Examples of maps produced through the new program accompany this post.

How it Works

To get started, the Advisor is sent a Google Form link where they select their county or counties, background layers, additional points of interest, basemap, administrative boundaries and branding elements. The idea is to pick layers that are relevant to the programmatic focus - think of it like building a sandwich at a deli counter! A forestry Advisor may want to see national forest boundaries or land cover in the background, while a food systems Advisor may want to include food distribution infrastructure on the map.

The menu of available layers is constantly evolving, but the current selection is shown below. If a desired layer is missing (e.g., a certain land-use layer), the order form includes an open-ended question where the Advisor can describe the layer so we can try to find it.

Some Advisors get very excited when they see the layer menu, and request what we call a “kitchen sink” map. Maps with too many layers rarely turn out well, so we provide some guidelines about good cartography and follow up when reviewing the drafts.

Background Layers

- Major roads

- Secondary roads

- City points/boundaries

- Unincorporated areas

Additional Points of Interest

- UCCE Offices

- Schools

- Hospitals

- Airports

- Railroads

- Other UC ANR Locations

Basemap Layers

- Hillshade topography

- Landfire vegetation

- Satellite imagery

- Any other basemap from ESRI's gallery

- No background image

Administrative Boundaries

- Census blocks

- Census tracts

- National forests

- Parcel boundaries (up to a minimum size)

- Protected areas

- School districts

- Zip code areas

- Airspace

Finally, the order form asks the user to provide a title for the map, indicate whether they want a printable map, a companion web map, or both, and select an output size. We can design either, but printing is up to the Advisor.

Design and Review

Once the information is submitted, an IGIS map-maker will go to work and assemble a rough draft of the map in ArcGIS Pro. Once the draft is complete, we will reach back out to the Advisor to schedule a time to chat about any changes needed and final touches they'd like to see. From that point we will continue to make iterations until it is exactly what they're looking for.

Researchers requesting paper maps will get a high-quality PDF that they can take to their local print center for printing (we unfortunately don't have the capacity to print maps, but can help you identify a place to print locally). Clients also receive a copy of the ArcGIS Pro project so they can continue working on it on their own.

Even the Best Maps are Never Finished

Just like good research and extension programs, maps must evolve to keep up with changing needs. A newer version of a layer may come out, or the program may evolve to embrace a new clientele group. In any case, change is always expected.

Fortunately, GIS is well equipped to make updates, and we provide all kinds of support to ANR academics to continue their mapping journey. These include ArcGIS licenses for all ANR employees, workshops, Tech Notes, and Office Hours. We make maps with handover in mind, whether we are working on an ArcGIS Pro project or web map, so that the researcher can run with it and make variations for different needs and purposes.

Here are a few more examples of advisor maps we have produced in the past:

How do I get started?

Currently, this service is available at no cost to UC ANR CE Advisors and other academics who have been in their positions for less than two years. If you fit this bill and are interested, feel free to reach out to Ben Satzman or Andy Lyons for more info.

- Author: Shane T Feirer

IGIS is pleased to share that beginning January 22, our servers will migrate to the new data center near Sacramento. As part of the move, our equipment is being upgraded to more stable and secure platforms. This migration continues the UC ANR systems upgrade that began in December of last year and has been in the works for months. The goal is to further optimize our infrastructure to provide you with improved services and performance. We are tremendously grateful for the hard work of CSIT and the vision of CSIT's Sree Mada in spearheading this change.

The process is expected to take a week. Unfortunately, many of the websites and mapping services we host will be offline during this period. Impacted sites and services include:

- IGIS Website (custom scripts, content, and styles)

- Backyard Poultry Survey (UC Davis Vet Med, English and Spanish versions)

- Parcel Prioritization Tool (Altamont Open Space Advisory Committee, Alameda County)

- Wildfire Fuel Mapper Multi-Parcel Map Tool

- Wildfire Fuel Mapper, https://wildfirefuelmapper.org/ (not affected)

We apologize for this temporary disruption. Rest assured that our team is dedicated to minimizing downtime and ensuring a smooth transition. For any questions or concerns, please contact IGIS Service Center Head Shane Feirer.

- Author: Sean D Hogan

- Author: Brandon Stark

Specifically, Title 18, Subtitle B, Sections 1821-1833 will prohibit all Federal Agencies from purchasing any drone made in China, and will sunset all operations of Chinese drones in two (2) years. This will apply to all federal grants (Section 1825). Beginning two years after the enactment of this Act, except as provided in subsection (b) pertaining to the one exception of the National Oceanic and Atmospheric Administration (NOAA), no Federal funds awarded through a contract, grant, or cooperative agreement, or otherwise made available may be used to procure a covered unmanned aircraft system that is manufactured or assembled in China; or in connection with the operation of such a drone or unmanned aircraft system.

This legislation also requires the development of a new federal-wide policy on the purchase of drones (including through grants and contracts). The new standard will likely be some version of the Blue UAS list (vetted by DoD for security). We will have to wait and see how this unfolds.

For now, assuming the current NDAA compliance requirements, we have only the following platform recommendations for multispectral/photogrammetry operations using federal funds. We hope that newly authorized technology will be available soon.

- eBee X (~$13k) fixed-wing, Cat 3 OOP, but requires laptop in field

- Wingtra Gen II (~$36k), VTOL with forward flight

- Freefly Astro (~$32k mapping package), multi-rotor, 1.5kg payload capacity

- Aurelia X6 Pro V2 (~$22k NDAA compliant package, no payload), 6kg payload capacity
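For readers who keep equipment notes in a script, here is a minimal Python sketch of how the list above could be filtered by budget and payload. The `Platform` class and `shortlist` function are hypothetical names made up for illustration. The prices are the approximate figures quoted above, and a payload of `None` means the list gives no figure. This is a rough planning aid only; it is not a compliance check and says nothing about current pricing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Platform:
    """One entry from the recommendation list (hypothetical structure)."""
    name: str
    approx_cost_usd: int         # approximate price quoted in the list
    payload_kg: Optional[float]  # None where the list gives no payload figure
    notes: str


# Values transcribed from the list above; nothing here is independently verified.
PLATFORMS = [
    Platform("eBee X", 13_000, None, "fixed-wing, Cat 3 OOP, requires laptop in field"),
    Platform("Wingtra Gen II", 36_000, None, "VTOL with forward flight"),
    Platform("Freefly Astro", 32_000, 1.5, "multi-rotor, mapping package"),
    Platform("Aurelia X6 Pro V2", 22_000, 6.0, "NDAA compliant package, price excludes payload"),
]


def shortlist(platforms: List[Platform], max_cost_usd: int,
              min_payload_kg: Optional[float] = None) -> List[Platform]:
    """Return platforms within budget, cheapest first.

    If min_payload_kg is given, platforms with no stated payload
    capacity are skipped rather than guessed at.
    """
    picks = []
    for p in platforms:
        if p.approx_cost_usd > max_cost_usd:
            continue
        if min_payload_kg is not None:
            if p.payload_kg is None or p.payload_kg < min_payload_kg:
                continue
        picks.append(p)
    return sorted(picks, key=lambda p: p.approx_cost_usd)


if __name__ == "__main__":
    for p in shortlist(PLATFORMS, max_cost_usd=25_000):
        print(f"{p.name}: ~${p.approx_cost_usd:,} ({p.notes})")
```

Skipping platforms with an unstated payload is a deliberately conservative choice; confirm sensor compatibility with the vendor before budgeting.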

For more information, please consider signing up for office hours with the UC ANR IGIS Statewide Program:

https://igis.ucanr.edu/Office_Hours/

or refer to resources through UC Unmanned Aircraft System Safety site:

https://www.ucop.edu/safety-and-loss-prevention/environmental/program-resources/unmanned-aircraft-systems-safety.html