First of all, Pearl Street Mall is just as lovely as I remember, but OMG it is so crowded, with so many new stores and chains. Still, good food, good views, hot weather, lovely walk.

Welcome to Day 2! http://neondataskills.org/data-institute-17/day2/

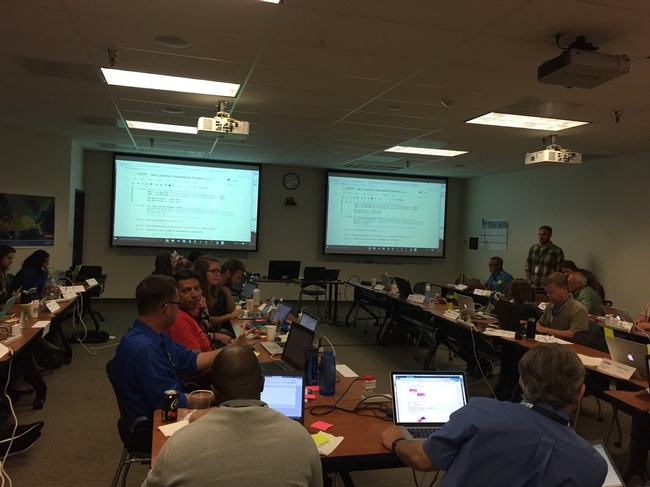

Our morning session focused on reproducibility and workflows with the great Naupaka Zimmerman. Remember the characteristics of reproducibility: organization, automation, documentation, and dissemination. We focused on organization, and spent an enjoyable hour sorting through an example messy directory of miscellaneous data files and code. The directory looked a bit like many of my directories. Lesson learned. We then moved to working with new data and git to reinforce yesterday's lessons. Git was super confusing to me 2 weeks ago, but now I think I love it. We also went back and forth between Jupyter and standalone Python scripts, abstracted our variables, and lo and behold I got my script to run.
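For anyone wondering what "abstracting variables" looks like when a notebook cell becomes a standalone script, here is a minimal sketch. It is not the workshop's code: the option names, the `SITE` placeholder, and the `tile_path` helper are all made up for illustration.

```python
import argparse
from pathlib import Path


def build_parser():
    """Collect every setting that used to be hard-coded in a notebook cell."""
    parser = argparse.ArgumentParser(description="Summarize one raster tile.")
    parser.add_argument("--data-dir", type=Path, default=Path("data"))
    parser.add_argument("--site", default="SITE")  # placeholder site code
    parser.add_argument("--nodata", type=float, default=-9999.0)
    return parser


def tile_path(data_dir, site, product="CHM"):
    """Build the input path from variables instead of pasting it in by hand."""
    return data_dir / site / f"{site}_{product}.tif"


# Passing an explicit argument list keeps the example runnable inside Jupyter too.
args = build_parser().parse_args(["--data-dir", "data/raw", "--site", "SITE"])
print(tile_path(args.data_dir, args.site))
```

The point is that paths, site codes, and no-data values live in one place at the top, so the same script can be rerun on a new tile without editing the body.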

The afternoon focused on Lidar (yay!). Prior to coding we talked about discrete and waveform data and collection, and about the OpenTopography (http://www.opentopography.org/) project with Benjamin Gross. The OpenTopography talk was really interesting. They are not just a data distributor any more; they also provide an HPC framework (mostly TauDEM for now) on their servers at SDSC (http://www.sdsc.edu/). They are going to roll out user-initiated HPC functionality soon, so stay tuned for their new "pluggable assets" program. This is well worth checking into. We also spent some time live coding in Python with Bridget Hass, working with a CHM from the SERC site in California, and had a nerve-wracking code challenge to wrap up the day.
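As a reminder of what a CHM (canopy height model) is: it is conventionally the surface model minus the terrain model. The sketch below uses tiny made-up grids and arbitrary height breaks, so treat it as an illustration of the idea rather than what we actually ran in class.

```python
import numpy as np

# Made-up 2 x 3 elevation grids in metres, purely for illustration.
dsm = np.array([[105.0, 112.0, 101.0], [118.0, 100.5, 130.0]])  # top of canopy
dtm = np.array([[100.0, 100.0, 100.0], [100.0, 100.0, 100.0]])  # bare ground

chm = dsm - dtm  # canopy height above ground

# Bin heights into classes:
# 0 = below 2 m, 1 = 2 m up to 10 m, 2 = 10 m up to 20 m, 3 = 20 m and above.
# The break points are arbitrary choices for this sketch.
breaks = [2.0, 10.0, 20.0]
height_class = np.digitize(chm, breaks)

print(chm)
print(height_class)
```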

Fun additional take-home messages/resources:

- ISO International standard for dates = YYYY-MM-DD

- Missing values in R = NA; in Python, NaN (or None), with -9999 a common no-data value in raster files

- For cleaning messy data, check out OpenRefine, a free and open source tool: http://openrefine.org/

- Excel is cray-cray, best practices for spreadsheets: http://www.datacarpentry.org/spreadsheet-ecology-lesson/

- Morpho (from DataOne) to enter metadata: https://www.dataone.org/software-tools/morpho

- Pay attention to file size with your git repositories - check out: https://git-lfs.github.com/. Git is good for things you do with your hands (like code), not for large data.

- Funny how many food metaphors are used in tech teaching: APIs as a menu in a restaurant; git add vs git commit as a grocery cart before and after purchase; finding GIS data is sometimes like shopping for ingredients in a specialty grocery store (that one is mine)...

- Markdown renderer: http://dillinger.io/

- MIT License, like Creative Commons for code: https://opensource.org/licenses/MIT

- "Jupyter" means it runs with Julia, Python & R, who knew?

- There is a new project called "Feather" that allows compatibility between Python and R: https://blog.rstudio.org/2016/03/29/feather/

- All the NEON airborne data can be found here: http://www.neonscience.org/data/airborne-data

- Information on the TIFF specification and TIFF tags here: http://awaresystems.be/. However, their TIFF Tag Viewer is only for Windows.
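Two of the notes above (ISO dates and missing values) are easy to try out in a few lines of Python. This is a small sketch with an arbitrary date and made-up height values; the `NODATA` name is my own, and -9999 is treated here as a no-data sentinel rather than something Python itself understands.

```python
from datetime import date, datetime

import numpy as np

# ISO 8601 dates (YYYY-MM-DD) sort correctly as plain strings
# and round-trip through the standard library.
flight_day = date(2017, 6, 20)  # arbitrary example date
stamp = flight_day.isoformat()
parsed = datetime.strptime(stamp, "%Y-%m-%d").date()

# Swap the -9999 sentinel for NaN before doing any statistics,
# otherwise it drags the mean far below zero.
NODATA = -9999.0
heights = np.array([3.2, NODATA, 7.8, NODATA, 5.0])  # made-up values
clean = np.where(heights == NODATA, np.nan, heights)
mean_height = np.nanmean(clean)

print(stamp, parsed, mean_height)
```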

Thanks to everyone today! Megan Jones (our fearless leader), Naupaka Zimmerman (Reproducibility), Tristan Goulden (Discrete Lidar), Keith Krause (Waveform Lidar), Benjamin Gross (OpenTopography), Bridget Hass (coding lidar products).

Our home for the week

I left Boulder 20 years ago on a wing and a prayer with a PhD in hand, overwhelmed with bittersweet emotions. I was sad to leave such a beautiful city, nervous about what was to come, but excited to start something new in North Carolina. My future was uncertain, and as I took off from DIA that final time I basically had Tom Petty's Free Fallin' and Learning to Fly on repeat on my Walkman. Now I am back, and summer in Boulder is just as breathtaking as I remember it: clear blue skies, the stunning Flatirons making a play at outshining the snow-dusted Rockies behind them, and crisp fragrant mountain breezes acting as my madeleine. I'm back to visit the National Ecological Observatory Network (NEON) headquarters, attend their 2017 Data Institute, and re-invest in my skillset for open reproducible workflows in remote sensing.

Day 1 Wrap Up from the NEON Data Institute 2017

What a day! http://neondataskills.org/data-institute-17/day1/

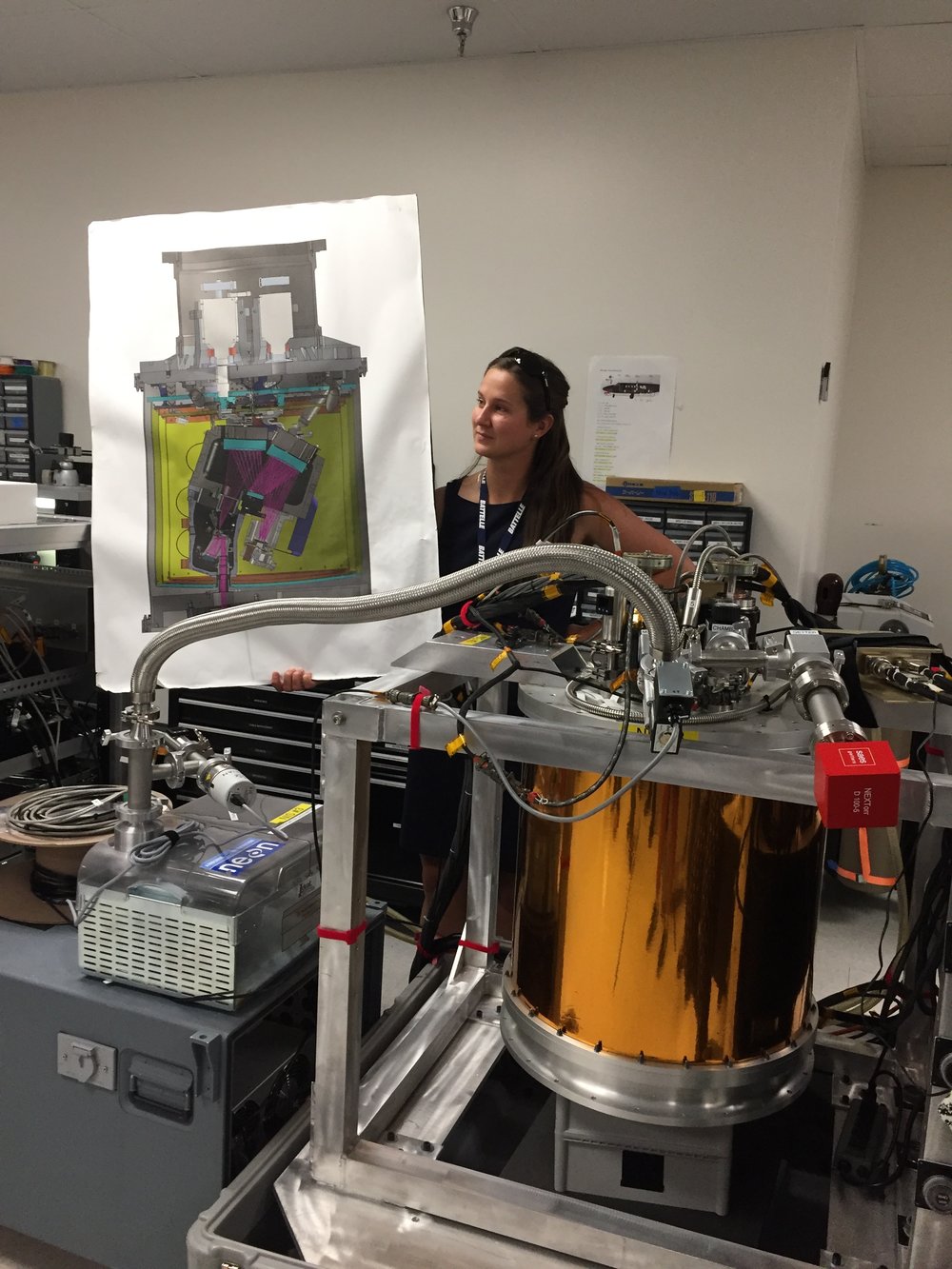

Attendees (about 30) included graduate students, old dogs (new tricks!) like me, and research scientists interested in building reproducible workflows into their work. We are a mix of ages and genders. The morning session focused on learning about the NEON program (http://www.neonscience.org/): its purpose, sites, sensors, data, and protocols. NEON, funded by NSF and managed by Battelle, was conceived in 2004 and will go online in Jan 2018 for a 30-year mission providing free and open data on the drivers of and responses to ecological change. NEON data comes from IS (instrumented systems), OS (observation systems), and RS (remote sensing).

We focused on the Airborne Observation Platform (AOP), which uses 2 (soon to be 3) aircraft, each with a payload of a hyperspectral sensor (from JPL; 426 bands of 5 nm covering 380-2510 nm, 1 mRad IFOV, 1 m resolution at 1000 m AGL), a lidar sensor (Optech and soon to be Riegl, discrete and waveform), and an RGB camera (PhaseOne D8900). These sensors produce co-registered raw data, which are processed at NEON headquarters into various levels of data products. Flights are planned to cover each NEON site once, timed to capture 90% or higher peak greenness, which is pretty complicated when distance and weather are taken into account. Pilots and techs are on the road and in the air from March through October collecting these data.

In the afternoon session, we took a fairly immersive dunk into Jupyter notebooks for exploring hyperspectral imagery in HDF5 format. We did exploration, band stacking, widgets, and vegetation indices. We closed with a fast discussion about TGF (The Git Flow): the way to store, share, and version your data and code to ensure reproducibility. We forked, cloned, committed, pushed, and pulled. Not much more to write about, but the whole day was awesome!
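To give a flavor of the vegetation index part, here is a minimal NDVI sketch with NumPy. It does not read a real HDF5 file: the reflectance cube is random numbers, the band centres are assumed to be evenly spaced across the 380-2510 nm range quoted above, and the 660 nm and 850 nm targets and the `nearest_band` helper are my own choices for illustration.

```python
import numpy as np

# Assumption: evenly spaced band centres spanning the quoted sensor range.
# Real files carry the exact wavelength of every band in their metadata.
wavelengths = np.linspace(380.0, 2510.0, 426)  # nm


def nearest_band(target_nm):
    """Index of the band whose centre is closest to target_nm."""
    return int(np.argmin(np.abs(wavelengths - target_nm)))


# Fake reflectance cube (rows, cols, bands) standing in for data read from HDF5.
rng = np.random.default_rng(0)
cube = rng.uniform(0.01, 0.6, size=(4, 5, 426))

red = cube[:, :, nearest_band(660.0)]
nir = cube[:, :, nearest_band(850.0)]

# NDVI = (NIR - Red) / (NIR + Red); stays within [-1, 1] for positive reflectance.
ndvi = (nir - red) / (nir + red)
print(ndvi.shape, float(ndvi.min()), float(ndvi.max()))
```

With real data the only changes would be loading the cube and the wavelength list from the file instead of generating them.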

Fun additional take-home messages:

- NEON is amazing. I should build some class labs around NEON data, and NEON classroom training materials are available: http://www.neonscience.org/resources/data-tutorials

- Making participants do organized homework is necessary for complicated workshop content: http://neondataskills.org/workshop-event/NEON-Data-Insitute-2017

- HDF5 as a possible alternative data format for Lidar, holding both discrete and waveform data

- NEON imagery data is FedExed daily to headquarters after collection

- I am a crap python coder

- #whofallsbehindstaysbehind

- Tabs are my friend

Thanks to everyone today, including: Megan Jones (Main leader), Nathan Leisso (AOP), Bill Gallery (RGB camera), Ted Haberman (HDF5 format), David Hulslander (AOP), Claire Lunch (Data), Cove Sturtevant (Towers), Tristan Goulden (Hyperspectral), Bridget Hass (HDF5), Paul Gader, Naupaka Zimmerman (GitHub flow).

Today was WebGIS and the Geoweb (I know, we could do a whole semester), and we rounded up some nice resources.

- Open Street Map interactions (from Vanessa):

- Here is Overpass Turbo, the OSM data filtering site. https://overpass-turbo.eu

- Here is Tag Info, where you can find the keys to query information on Overpass Turbo. https://taginfo.openstreetmap.org/

- Privacy (from Wyeth): Radiolab did a great piece on the intersection between GIS data and privacy.

- Link to the article: http://www.radiolab.org/story/update-eye-sky/ (this is the updated article after changes from the original broadcast in June 2015 [http://www.radiolab.org/story/eye-sky/] )

- Also, the company that developed from this: http://www.pss-1.com/
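For the Overpass Turbo and Tag Info pair above, here is a small sketch of how a key/value from Tag Info turns into an Overpass QL query. The `overpass_query` helper and the bounding box are hypothetical; the snippet only builds the query text, which can then be pasted into the Overpass Turbo editor.

```python
def overpass_query(key, value, south, west, north, east):
    """Build an Overpass QL query for nodes tagged key=value inside a bounding box."""
    # Overpass QL bounding boxes are ordered (south, west, north, east).
    bbox = f"({south},{west},{north},{east})"
    return f'[out:json];node["{key}"="{value}"]{bbox};out;'


# Example: cafes in a small box around downtown Berkeley (coordinates are approximate).
query = overpass_query("amenity", "cafe", 37.86, -122.28, 37.88, -122.25)
print(query)
```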

Register now for the March 2016 Spatial Data Science Bootcamp at UC Berkeley!

We live in a world where the importance and availability of spatial data are ever increasing. Today’s marketplace needs trained spatial data analysts who can:

- compile disparate data from multiple sources;

- use easily available and open technology for robust data analysis, sharing, and publication;

- apply core spatial analysis methods;

- and utilize visualization tools to communicate with project managers, the public, and other stakeholders.

To help meet this demand, International and Executive Programs (IEP) and the Geospatial Innovation Facility (GIF) are hosting a 3-day intensive Bootcamp on Spatial Data Science on March 23-25, 2016 at UC Berkeley.

With this Spatial Data Science Bootcamp for professionals, you will learn how to integrate modern Spatial Data Science techniques into your workflow through hands-on exercises that leverage today's latest open source and cloud/web-based technologies. We look forward to seeing you here!

To apply and for more information, please visit the Spatial Data Science Bootcamp website.

Limited space available. Applications are due February 19th, 2016.

They found that the economic value of just one year of Landsat data far exceeds the multi-year total cost of building, launching, and managing Landsat satellites and sensors. This would be considered a stunning return on investment in any conventional business setting.

Full article by Jon Campbell, U.S. Geological Survey found here.