I left Boulder 20 years ago on a wing and a prayer with a PhD in hand, overwhelmed with bittersweet emotions. I was sad to leave such a beautiful city, nervous about what was to come, but excited to start something new in North Carolina. My future was uncertain, and as I took off from DIA that final time I basically had Tom Petty's Free Fallin' and Learning to Fly on repeat on my Walkman. Now I am back, and summer in Boulder is just as breathtaking as I remember it: clear blue skies, the stunning Flatirons making a play at outshining the snow-dusted Rockies behind them, and crisp fragrant mountain breezes acting as my Madeleine. I'm back to visit the National Ecological Observatory Network (NEON) headquarters, attend their 2017 Data Institute, and reinvest in my skill set for open, reproducible workflows in remote sensing.

Day 1 Wrap Up from the NEON Data Institute 2017

What a day! http://neondataskills.org/data-institute-17/day1/

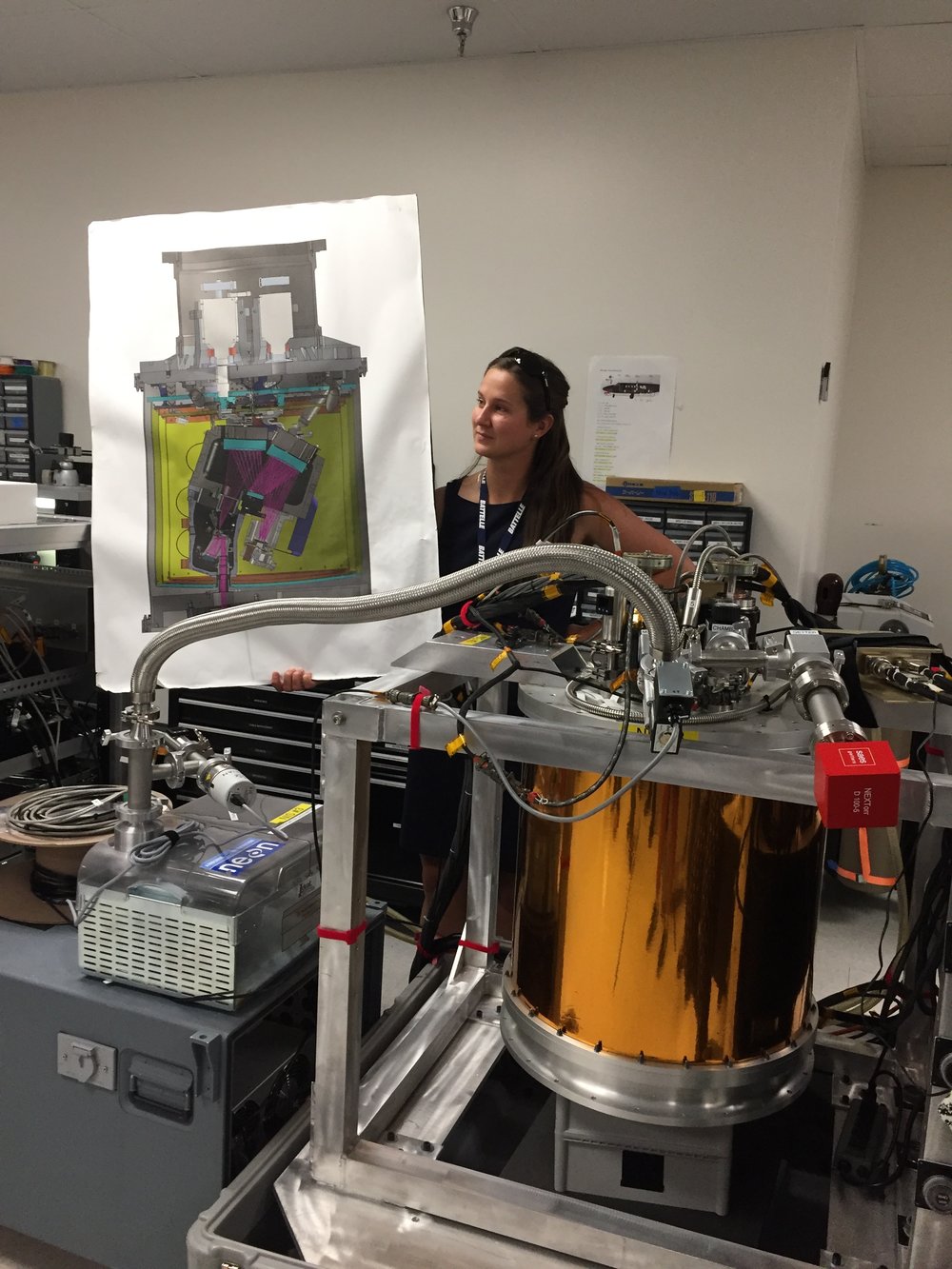

Attendees (about 30) included graduate students, old dogs (new tricks!) like me, and research scientists interested in building reproducible workflows into their work. We are a mix of ages and genders. The morning session focused on learning about the NEON program (http://www.neonscience.org/): its purpose, sites, sensors, data, and protocols. NEON, funded by NSF and managed by Battelle, was conceived in 2004 and will go online in Jan 2018 for a 30-year mission providing free and open data on the drivers of and responses to ecological change. NEON data come from IS (instrumented systems), OS (observation systems), and RS (remote sensing). We focused on the Airborne Observation Platform (AOP), which uses 2 (soon to be 3) aircraft, each with a payload of a hyperspectral sensor (from JPL; 426 bands of 5 nm covering 380-2510 nm; 1 mrad IFOV; 1 m resolution at 1000 m AGL), lidar sensors (Optech and soon to be Riegl; discrete and waveform), and an RGB camera (PhaseOne D8900). These sensors produce co-registered raw data, which are processed at NEON headquarters into various levels of data products. Flights are planned to cover each NEON site once, timed to capture 90% or higher peak greenness, which is pretty complicated when distance and weather are taken into account. Pilots and techs are on the road and in the air from March through October collecting these data.

In the afternoon session, we got through a fairly immersive dunk into Jupyter notebooks for exploring hyperspectral imagery in HDF5 format. We did exploration, band stacking, widgets, and vegetation indices. We closed with a fast discussion about TGF (The Git Flow): the way to store, share, and control versions of your data and code to ensure reproducibility. We forked, cloned, committed, pushed, and pulled. Not much more to write about, but the whole day was awesome!
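For readers who have not computed a vegetation index from hyperspectral data, here is a minimal sketch of the idea in plain Python. It is not the notebook code from the Institute. It assumes the 426 band centers are evenly spaced between 380 and 2510 nm, and the function names (`band_centers`, `nearest_band`, `ndvi`) and the red and near-infrared wavelengths (660 nm and 850 nm) are illustrative choices of mine.

```python
def band_centers(n_bands=426, start_nm=380.0, end_nm=2510.0):
    """Approximate band-center wavelengths, assuming even spacing (an assumption)."""
    step = (end_nm - start_nm) / (n_bands - 1)
    return [start_nm + i * step for i in range(n_bands)]


def nearest_band(centers, wavelength_nm):
    """Index of the band whose center is closest to the requested wavelength."""
    return min(range(len(centers)), key=lambda i: abs(centers[i] - wavelength_nm))


def ndvi(spectrum, centers, red_nm=660.0, nir_nm=850.0):
    """NDVI = (NIR - red) / (NIR + red) for one pixel's reflectance spectrum."""
    red = spectrum[nearest_band(centers, red_nm)]
    nir = spectrum[nearest_band(centers, nir_nm)]
    if nir + red == 0:
        return float("nan")  # avoid dividing by zero on empty pixels
    return (nir - red) / (nir + red)


# Synthetic "vegetation-like" pixel: dark in the visible, bright past 700 nm.
centers = band_centers()
pixel = [0.05 if wl < 700.0 else 0.45 for wl in centers]
print(round(ndvi(pixel, centers), 3))
```

With real NEON data the spectrum would come from the reflectance array in the HDF5 file and the band centers from its wavelength metadata, rather than from the synthetic values used here.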

Fun additional take-home messages:

- NEON is amazing. I should build some class labs around NEON data, and NEON classroom training materials are available: http://www.neonscience.org/resources/data-tutorials

- Making participants do organized homework is necessary for complicated workshop content: http://neondataskills.org/workshop-event/NEON-Data-Insitute-2017

- HDF5 as a possible alternative data format for Lidar - holding both discrete and waveform

- NEON imagery data is FedExed daily to headquarters after it is collected

- I am a crap Python coder

- #whofallsbehindstaysbehind

- Tabs are my friend

Thanks to everyone today, including: Megan Jones (Main leader), Nathan Leisso (AOP), Bill Gallery (RGB camera), Ted Haberman (HDF5 format), David Hulslander (AOP), Claire Lunch (Data), Cove Sturtevant (Towers), Tristan Goulden (Hyperspectral), Bridget Hass (HDF5), Paul Gader, Naupaka Zimmerman (GitHub flow).

- Author: Andy Lyons

- Author: Sean Hogan

Last week we enjoyed attending the CalGIS 2017 conference in Oakland. This year the meeting was co-hosted with LocationCon, so it was larger than usual and had a good mix of participants from government, non-profits, academia, and consulting companies. As expected there were a lot of people from California, but we also met a lot of people from other parts of the US.

The first day of the conference was devoted to workshops, and IGIS gave a half-day version of our workshop on drone technology and data analysis. This was well attended, and one of several sessions focused on drone technology. For us, this was also more preparation for our upcoming Dronecamp at the end of July.

The following day, Andy gave a presentation on some of the issues for scaling up drone capacity within a specific institutional setting like ANR. We discussed some of the issues we've been dealing with, including matching the scale of the data to the scale of science and management questions, outreach and training, regulatory compliance, and tailoring off-the-shelf technology for specific applications and contexts. The Q&A period highlighted a number of common challenges facing many organizations striving to take advantage of drone technology. One of the most common needs is developing institutional level policies to ensure safety and compliance with a dynamic array of federal, state, and local regulations. This discussion reminded us how fortunate ANR is to be backed by the UC Center of Excellence on Unmanned Aircraft System Safety, because many local agencies and public utilities are still trying to figure it out.

Another common theme that came up was management of the massive amount of data that drones can collect, and how to share and find drone data. Managing drone data is challenging because of the sheer volume of data. This makes many traditional strategies and platforms unworkable, and even cloud-based solutions difficult to use because of long transfer times. Like many programs, we started managing drone data by adapting existing tools and established practices from other fields like GIS and remote sensing, which we have been refining as we learn more and as our drone service program grows to include more people and projects. We recently started documenting our data management system in a Tech Note (more about that in an upcoming blog), and are currently exploring a new online platform for dissemination in collaboration with ESRI (stay tuned for more info about that also). What became clear at the conference, however, is that the tools and platforms for drone data management are still catching up, and we have a long way to go before we can reach the capabilities of portals for more traditional GIS data, such as the State of CA Geoportal or even the National Map.

Other highlights from the conference were the many excellent talks, including presentations on using drones to create a very precise digital elevation model of a wetland restoration site, techniques for machine learning classification of aerial imagery, and the US Forest Service's system-wide database called EDW. We also heard about some of the exciting new features of Cal-Adapt, including an API that will dramatically simplify the process of creating decision support tools and other applications that require downscaled climate forecasts. Many of the presentations are available through the conference website, all of which are well worth checking out.

Today we had our 1st Data Science for the 21st Century Program Conference. Some cool things that I learned:

- Cathryn Carson updated us on the status of the Data Science program on campus - we are teaching 1,200 freshmen data science right now. Amazing. And a new Dean is coming.

- Phil Stark on the danger of being at the bleeding edge of computation - if you put all your computational power into your model, you have nothing left to evaluate uncertainty in your model. Let science guide data science.

- David Ackerly believes in social networking!

- Cheryl Schwab gave us a summary of her evaluation work. The outcomes that we are looking for in the program are: concepts, communication, and interdisciplinary research.

- Trevor Houser from the Rhodium Group http://rhg.com/people/trevor-houser gave a very interesting and slightly optimistic view of climate change.

- Break out groups, led by faculty:

- (Boettiger) Data Science Grand Challenges: inference vs prediction; dealing with assumptions; quantifying uncertainty; reproducibility, communication, and collaboration; keeping science in data science; and keeping scientists in data science.

- (Hsiang) Civilization collapses through history:

- (Ackerly) Discussion on climate change and land use. 50% of the earth is either cropland or rangeland, and there is a fundamental tradeoff between land for food and wildlands. How do we deal with the externalities of our love of open space (e.g. forcing housing into the Central Valley)?

- Finally, we wrapped up with presentations from our wonderful 1st cohort of DS421 students and their mini-graduation ceremony.

- Plus WHAT A GREAT DAY! Berkeley was splendid today in the sun.

Day 3: I opened the day with a lovely swim with Elizabeth Havice (in the largest pool in New England? Boston? The Sheraton?) and then embarked on a multi-mile walk around the fair city of Boston. The sun was out and the wind was up, showing the historical buildings and waterfront to great advantage. The 10-year-old Institute of Contemporary Art was showing in a constrained space, but it did host an incredibly moving video installation from Steve McQueen (Director of 12 Years a Slave) called “Ashes” about the life and death of a young fisherman in Grenada.

My final AAG attendance involved two plenaries hosted by the Remote Sensing Specialty Group and the GIS Specialty Group, who, in their wisdom, decided to host plenaries by two absolute legends in our field – Art Getis and John Jensen – at the same time. #battleofthetitans. #gisvsremotesensing. So, I tried to get what I could from both talks. I started with the Waldo Tobler Lecture given by Art Getis: The Big Data Trap: GIS and Spatial Analysis. Compelling title! His perspective as a spatial statistician on the big data phenomenon is a useful one. He talked about how fast data are growing: Every minute – 98K tweets; 700K FB updates; 700K Google searches; 168+M emails sent; 1,820 TB of data created. Big data is growing in spatial work; new analytical tools are being developed, data sets are generated, and repositories are growing and becoming more numerous. But, there is a trap. And here it is. The trap of Big Data:

10 Erroneous assumptions to be wary of:

- More data are better

- Correlation = causation

- Gotta get on the bandwagon

- I have an impeccable source

- I have really good software

- I am good at creating clever illustrations

- I have taken requisite spatial data analysis courses

- It’s the scientific future

- Accessibility makes it ethical

- There is no need to sample

He then asked: what is the role of spatial scientists in the big data revolution? He says our role is to find relationships in a spatial setting; to develop technologies or methods; to create models and use simulation experiments; to develop hypotheses; to develop visualizations and to connect theory to process.

The summary from his talk is this: Start with a question; Differentiate excitement from usefulness; Appropriate scale is mandatory; and Remember more may or may not be better.

When Dr Getis finished I made a quick run down the hall to hear the end of the living legend John Jensen’s talk on drones. This man literally wrote the book on remote sensing, and he is the consummate teacher – always eager to teach and extend his excitement to a crowded room of learners. His talk was entitled Personal and Commercial Unmanned Aerial Systems (UAS) Remote Sensing and their Significance for Geographic Research. He presented a practicum about UAV hardware, software, cameras, applications, and regulations. His excitement about the subject was obvious, and at points in his talk he did a call and response with the crowd. I came in as he was beginning his discussion on cameras. He also discussed practical experience with flight planning and data capture, and highlighted the importance of obstacle avoidance and videography in the future. Interestingly, he has added movement to his “elements of image interpretation”. Neat. He says drones are going to be a routine part of everyday geographic field research.

What a great conference, and I feel honored to have been part of it.

Day 1: Wednesday I focused on the organized sessions on uncertainty and context in geographical data and analysis. I’ve found AAGs to be more rewarding if you focus on a theme, rather than jump from session to session. But fewer steps on the iWatch of course. There are nearly 30 (!) sessions of speakers presenting on these topics throughout the conference.

An excellent plenary session on New Developments and Perspectives on Context and Uncertainty started us off, with Mei Po Kwan and Michael Goodchild providing overviews. We need to create reliable geographical knowledge in the face of the challenges brought up by uncertainty and context, for example: people and animals move through space, phenomena are multi-scaled in space and time, data is heterogeneous, making our creation of knowledge difficult. There were sessions focusing on sampling, modeling, & patterns, on remote sensing (mine), on planning and sea level rise, on health research, on urban context and mobility, and on big data, data context, data fusion, and visualization of uncertainty. What a day! All of this is necessarily interdisciplinary. Here are some quick insights from the keynotes.

Mei Po Kwan focused on uncertainty and context in space and time:

- We all know about the MAUP concept, what about the parallel with time? The MTUP: modifiable temporal unit problem.

- Time is very complex. There are many aspects of time: momentary, time-lagged response, episodic, duration, cumulative exposure

- How do we aggregate, segment and bound spatial-temporal data in order to understand process?

- The basic message is that you must really understand uncertainty: Neighborhood effects can be overestimated if you don’t include uncertainty.
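To make the MTUP idea concrete for myself, here is a small sketch (mine, not from Kwan's talk) showing how the choice of temporal unit can change an apparent relationship. It uses a made-up hourly "exposure" series and a "response" that simply follows it one hour later; the names `make_lagged_pair`, `aggregate`, and `pearson`, and the 24-hour window, are all illustrative assumptions.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def aggregate(series, window):
    """Sum consecutive, non-overlapping windows (e.g. 24 hourly values into 1 day)."""
    return [sum(series[i:i + window])
            for i in range(0, len(series) - window + 1, window)]


def make_lagged_pair(n_steps=4800, seed=1):
    """Synthetic hourly exposure and a response that lags it by one hour."""
    rng = random.Random(seed)
    signal = [rng.gauss(0.0, 1.0) for _ in range(n_steps + 1)]
    exposure = signal[1:]
    response = signal[:-1]  # response[t] equals exposure[t - 1]
    return exposure, response


exposure, response = make_lagged_pair()
r_hourly = pearson(exposure, response)
r_daily = pearson(aggregate(exposure, 24), aggregate(response, 24))
print(f"hourly r = {r_hourly:.2f}, daily r = {r_daily:.2f}")
```

At the hourly unit the two series look unrelated, because the response is offset by one step. Aggregated to days they look strongly related, because each daily total shares 23 of its 24 hours. Same process, different temporal unit, different conclusion.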

As expected, Michael Goodchild gave a master class in context and uncertainty. No one else can deliver such complex material so clearly, with a mix of theory and common sense. Inspiring. Anyway, he talked about:

- Data are a source of context:

- Vertical context – other things that are known about a location, that might predict what happens and help us understand the location;

- Horizontal context – things about neighborhoods that might help us understand what is going on.

- Both of these aspects have associated uncertainties, which complicate analyses.

- Why is geospatial data uncertain?

- Location measurement is uncertain

- Any integration of location is also uncertain

- Observations are non-replicable

- Loss of spatial detail

- Conceptual uncertainty

- This is the paradox. We have abundant sources of spatial data, they are potentially useful. Yet all of them are subject to myriad types of uncertainty. And, the conceptual definition of context is fraught with uncertainty.

- He then talked about some tools for dealing with uncertainty, such as areal interpolation, and spatial convolution.

- He finished with some research directions, including focusing on behavior and pattern, better ways of addressing confidentiality, and development of a better suite of tools that include uncertainty.

My session went well – I got a great question from Mark Fonstad about the real independence of errors – as in, canopy height and canopy base height are likely correlated, so aren’t their errors correlated too? Why do you treat them as independent? Which kind of blew my tiny mind, but Qinghua stepped in with some helpful words about the difficulties of sampling from a joint probability distribution in Monte Carlo simulations, etc.
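To see why the question matters, here is a toy Monte Carlo sketch. It is not the analysis from our paper. It assumes a hypothetical derived quantity, the difference between canopy height and canopy base height, with Gaussian errors of standard deviation `sigma_h` and `sigma_b` and a correlation `rho` between them. All of those names and values are illustrative.

```python
import math
import random
import statistics


def simulate_difference_variance(sigma_h, sigma_b, rho, n=20000, seed=42):
    """Monte Carlo variance of (height error - base height error).

    Correlated Gaussian errors are drawn from two independent standard
    normals using the 2x2 Cholesky factor of the correlation matrix.
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        err_h = sigma_h * z1
        err_b = sigma_b * (rho * z1 + math.sqrt(1.0 - rho ** 2) * z2)
        diffs.append(err_h - err_b)
    return statistics.pvariance(diffs)


def analytic_difference_variance(sigma_h, sigma_b, rho):
    """Var(A - B) = Var(A) + Var(B) - 2 * rho * sd(A) * sd(B)."""
    return sigma_h ** 2 + sigma_b ** 2 - 2.0 * rho * sigma_h * sigma_b


for rho in (0.0, 0.8):
    mc = simulate_difference_variance(1.0, 1.0, rho)
    exact = analytic_difference_variance(1.0, 1.0, rho)
    print(f"rho = {rho}: Monte Carlo {mc:.2f}, analytic {exact:.2f}")
```

In this toy case, treating positively correlated errors as independent overstates the uncertainty of a difference (it would understate it for a sum). The hard part Qinghua pointed to is that with real data you have to estimate the joint distribution before you can sample from it.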

Plus we had some great times with Jacob, Leo, Yanjun and the Green Valley International crew who were showcasing their series of Lidar instruments and software. Good times for all!