- Author: Genoa Starrs

R and Python together at last, the AI takeover, and Quarto ups its game.

Posit::Conf, Posit's annual conference, was held last week in Seattle, WA. While the name might be unfamiliar, many of you will recognize Posit's former name, RStudio. Posit has produced many products R users know and love, including RStudio, Shiny, and the tidyverse. After a whirlwind three days, here are some takeaways from the conference!

A New All-in-One IDE

Togetherness was a key theme, with Posit rolling out the beta version of Positron, an IDE that combines elements of RStudio and VS Code and adds features of its own. While switching IDEs is generally not at the top of any coder's to-do list, Positron is multilingual, enabling users to code in R, Python, and Julia within a single project. It also introduces new ways to interact with your data, such as readily available summary statistics, the ability to filter or sort data by multiple fields while keeping the active query visible in the window at all times, and the option to resize graphs and figures in the plots pane through a simple user interface. Positron also works with many new and existing VS Code extensions, offering a wealth of customization and additional capabilities. RStudio is by no means leaving the picture: Positron is still in beta, and RStudio will continue to be supported for a good, long time. However, if you (like me) crave a unified interface for your R and Python coding, Positron may be worth trying out.

Helping R Users Learn Python

For Py-curious R coders, a session on “Python Rgonomics” suggested some packages to make the transition easier for those of us spoiled by the tidyverse.

- Polars for exceptionally fast data wrangling with dplyr-like syntax

- plotnine and seaborn for ggplot-like syntax when making graphs and figures

- Great Tables for producing functional, readable tables (also available in R as the gt package)

- pyenv for managing Python versions and environments

- pins (for R and Python!), which publishes objects to “boards” so that one or more users can access them across projects. Boards can include shared or networked folders, such as Dropbox or Google Drive.

AI for All

Melissa Van Bussel provided practical tips for using generative AI. She highlighted some new capabilities of ChatGPT 4o, including the ability to transcribe handwritten notes and tables while preserving colors and formatting. ChatGPT can even convert these into HTML or Quarto formats.

She shared insights on prompt engineering (i.e., how you ask questions of AI engines), noting, “Writing effective prompts goes hand in hand with your existing expertise.” Getting correct and useful output requires providing specific prompts and making corrections when errors occur. She recommended structuring prompts in a way that mirrors coding practices. For example, to generate a graph, start by specifying the data, then define how to map each axis and assign colors. Next, specify the graph or chart type and indicate any grouping by other variables. Finish with aesthetic preferences (e.g., palette, theme, title, legend).
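The ordering she described can be sketched in a few lines of Python. This is only an illustration of the idea, not anything shown in the talk: the function name `build_plot_prompt`, its parameters, and the penguins example are all made up for this post.

```python
def build_plot_prompt(data, x, y, color, chart_type, group_by=None, style=None):
    """Assemble a plotting prompt in the same order a plotting script is written."""
    steps = [
        f"Use the dataset {data}.",                         # 1. the data
        f"Map {x} to the x-axis and {y} to the y-axis.",    # 2. axis mappings
        f"Color the marks by {color}.",                     # 3. colors
        f"Draw a {chart_type}.",                            # 4. chart type
    ]
    if group_by:
        steps.append(f"Create one panel per {group_by}.")   # 5. grouping
    if style:
        prefs = ", ".join(f"{key}: {value}" for key, value in style.items())
        steps.append(f"Apply these aesthetic preferences: {prefs}.")  # 6. aesthetics
    return "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))


prompt = build_plot_prompt(
    data="penguins (one row per bird)",
    x="flipper length",
    y="body mass",
    color="species",
    chart_type="scatter plot",
    group_by="island",
    style={"palette": "colorblind-safe", "theme": "minimal", "title": "Penguin size"},
)
print(prompt)
```

The numbered prompt that comes out can be pasted into whichever AI tool you use; the point is simply that each line corresponds to a decision you would otherwise make in code.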

While presenters were enthusiastic about the possibilities of generative AI, a recurring theme was the need for users to provide clear direction and verify the results. One presenter compared AI to a newly hired human assistant: it can help with tasks effectively when given proper guidance, but it will make mistakes and requires careful supervision. Generative AI performs best when used to quickly accomplish tasks that users already have the knowledge and skills to handle themselves.

One of the most prevalent use cases for generative AI was in combination with Shiny. Joe Cheng's presentation demonstrated integrating AI into Shiny apps, specifically into Shiny dashboards. Users could request modifications to the data displayed on the dashboard using plain language, which the AI translated into SQL queries to adjust the output based on the request. This is particularly noteworthy as the AI accessed only the schema, not the actual data, to apply the filters.
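To make the schema-only idea concrete, here is a minimal Python sketch of the pattern. It is not Joe Cheng's implementation and does not use Shiny: the `fake_llm` function is a hypothetical stand-in for a real model call and just returns a canned query, and the `tips` table is invented for the example. The part worth noticing is that the prompt is built from column names and types only, and the returned SQL is then run locally against the data.

```python
import sqlite3


def describe_schema(conn, table):
    """Return column names and types only; no rows are read."""
    columns = conn.execute(f"PRAGMA table_info({table})").fetchall()
    listing = ", ".join(f"{name} {col_type}" for _, name, col_type, *_ in columns)
    return f"TABLE {table} ({listing})"


def fake_llm(prompt):
    """Hypothetical stand-in for a real model call; returns a canned query."""
    return "SELECT * FROM tips WHERE day = 'Sat' AND total_bill > 20"


def filter_dashboard(conn, table, request, llm=fake_llm):
    """Turn a plain-language request into SQL and run it locally."""
    prompt = (
        "Write one SQLite SELECT statement answering the request.\n"
        f"Schema: {describe_schema(conn, table)}\n"
        f"Request: {request}"
    )
    sql = llm(prompt).strip()
    # Refuse anything that is not a single read-only SELECT statement
    if not sql.lower().startswith("select") or ";" in sql:
        raise ValueError("only single SELECT statements are allowed")
    return prompt, conn.execute(sql).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tips (day TEXT, total_bill REAL, tip REAL)")
conn.executemany(
    "INSERT INTO tips VALUES (?, ?, ?)",
    [("Sat", 25.0, 4.0), ("Sun", 30.0, 5.0), ("Sat", 12.5, 2.0)],
)

prompt, rows = filter_dashboard(conn, "tips", "Saturday bills over $20")
print(prompt)
print(rows)
```

In a real app the filtered rows would feed the dashboard's plots and tables, and you would want stricter validation of the generated SQL than the simple check used here.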

Winston Chang developed an AI assistant to help people build Shiny apps and gave a live demonstration. Shiny for R is widely used, and although the assistant is still experimental, it showed promising outputs.

Quarto Expands its Horizons

Quarto is the relatively new successor to R Markdown (a publishing tool) that allows users to knit together code and text into documents, dashboards, web pages, PDFs, and even eBooks. An added advantage of Quarto is its multilingual capability: like Positron, it supports both R and Python. Some new capabilities were highlighted at the conference:

- Dashboards: Easily build dashboards using the Quarto extension in RStudio or Positron. Each visualization (graphs, maps, tables, and even just text boxes) can be arranged like tiles or cards. Dashboards can also include sidebars and toolbars, and can support interactivity, including cards that use Jupyter Widgets, Leaflet, and Shiny.

- PDFs: Quarto now supports Typst as an alternative to LaTeX, enabling users to create customized PDF outputs with a more intuitive language.

- HTML (Websites): Quarto (like R Markdown) can produce HTML outputs. However, it now also supports more flexible HTML code chunks and allows for HTML/CSS/JavaScript integration.

- Quarto Live: A Quarto extension that allows users to embed code blocks and exercises for R and Python into Quarto documents. This has lots of teaching applications, and can be used to generate exercises similar to those you find in DataCamp and other online coding courses.

- Closeread: A scrollytelling extension for Quarto that enables interactive storytelling similar to that seen in fancy New York Times articles or Esri StoryMaps. The gallery has some example outputs, while the guide can walk you through the process of creating your own scrollytelling page.

Finally, one of my favorite quality-of-life takeaways was simply that it is possible to include emoji in your R or Python code, either by using Unicode escapes or by simply pasting them in. While the demonstrated use case was to make specific messages stand out in your log or printed statements, sometimes a picture can convey what 1000 characters cannot and help you enjoy coding just a little bit more.
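In Python, for instance, that might look something like the sketch below. The `ICONS` dictionary and `status_line` helper are just names I made up for illustration; the three entries show a named Unicode escape, a code point escape, and a pasted-in emoji.

```python
ICONS = {
    "ok": "\N{WHITE HEAVY CHECK MARK}",  # named Unicode escape
    "warn": "\u26a0\ufe0f",              # code point escape (warning sign)
    "fail": "❌",                        # emoji pasted directly into the source
}


def status_line(level, message):
    """Prefix a log message with an emoji so it stands out when scanning output."""
    return f"{ICONS[level]} {message}"


print(status_line("ok", "data loaded"))
print(status_line("warn", "3 rows had missing coordinates"))
print(status_line("fail", "could not reach the server"))
```

Whether the emoji render nicely depends on your terminal and font, so it is worth a quick test wherever your logs end up.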

If you love (or begrudgingly engage in) data science, I encourage you to check out the conference next year. Virtual registration for educators and academics in 2024 was free, and hopefully will be next year too!

- Author: Sean Hogan

- Contributor: Shane Feirer

- Contributor: Andy Lyons

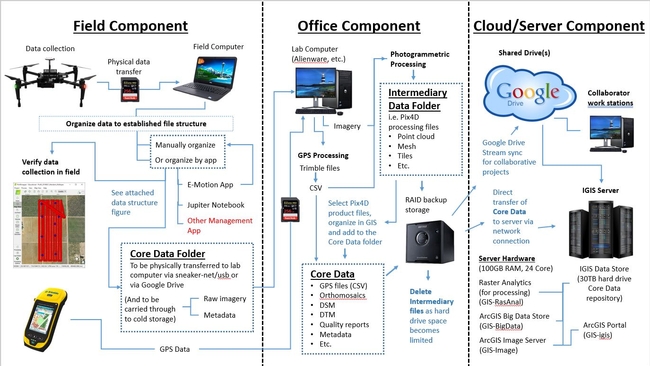

During this coronavirus lockdown, IGIS has set out to revamp its data infrastructure to address our growing needs for big data storage and management moving forward. In particular, over the past few years we have accumulated over a dozen terabytes of drone data and associated mapping products, constituting tens of thousands of project files, and the quantity of this data is only expected to keep growing. Typically this drone data has been processed on a number of local desktop computers, and then backed up onto RAID hard drives or the cloud for cold storage; however, this is far from ideal in terms of consistent organization, versioning, and ease of distribution.

As a solution to the problem, IGIS purchased a web server, equipped with multiple virtual machines (for processing, analysis, and web services) along with a 30TB RAID data store/repository. The repository was networked to our various IGIS computers and RAID storage devices, so that all of our drone data could be transferred over to it. After much consideration, we settled on a standardized file structure that could accommodate datasets from both past and future drone projects, with room for growth as needed. A Python script was written to automatically generate this file structure, with some metadata inputs for each project. Our previous projects' data were then moved into their appropriate slots in the new structure, while unwanted intermediary processing files were jettisoned, freeing up a ton of storage space. It would be correct to assume that this process of moving data was quite time consuming. However, moving forward, it will be easy to set up each project's file structure automatically right from its inception, beginning with running the Python script in ArcGIS Pro's Jupyter Notebook utility in the field. The data will eventually be delivered to the server repository down the pipeline in a nicely organized package (similar to what we would provide to our non-IGIS project collaborators).
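For readers curious what such a script might look like, here is a minimal sketch. It is not our actual script: the subfolder names, the `create_project` function, the metadata fields, and the example site are all hypothetical placeholders chosen for illustration.

```python
import json
import tempfile
from datetime import date
from pathlib import Path

# Hypothetical layout; these are placeholder names, not the real IGIS structure
SUBFOLDERS = [
    "01_raw_images",
    "02_flight_logs",
    "03_processing",
    "04_gis_products",
    "05_docs",
]


def create_project(root, site, flight_date, pilot):
    """Create a standardized project folder tree plus a small metadata file."""
    name = f"{flight_date:%Y%m%d}_{site.lower().replace(' ', '_')}"
    project = Path(root) / name
    for sub in SUBFOLDERS:
        (project / sub).mkdir(parents=True, exist_ok=True)
    metadata = {
        "site": site,
        "flight_date": flight_date.isoformat(),
        "pilot": pilot,
    }
    (project / "metadata.json").write_text(json.dumps(metadata, indent=2))
    return project


# Demonstrate in a throwaway directory so nothing is left behind
with tempfile.TemporaryDirectory() as root:
    project = create_project(root, "Example Site", date(2020, 4, 15), "A. Pilot")
    created = sorted(p.name for p in project.iterdir())
    print(project.name)
    print(created)
```

Because the folder names are derived from the metadata rather than typed by hand, every project ends up with the same layout, which is what makes later automation possible.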

That alone is a big step in the right direction, but it gets better. Because all of this data is now in a standardized file structure, with standardized folder naming conventions, scripting our ArcGIS portal to automatically connect with the data via the image server was only a small step away. With this complete, any IGIS team member can now access our entire inventory of post-processed, GIS-ready drone data layers via ArcGIS Online or ArcGIS Pro.
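The reason standardized folder names help is that a script can find every finished product without being told where each one lives. The sketch below shows only that discovery step, under assumed names: the `04_gis_products` folder, the `.tif` filter, and the `inventory_layers` function are hypothetical, and the actual publishing to the portal is not shown.

```python
import tempfile
from pathlib import Path


def inventory_layers(repo_root, product_folder="04_gis_products", suffix=".tif"):
    """Map each project folder name to a sorted list of its raster file names."""
    inventory = {}
    for project in sorted(Path(repo_root).iterdir()):
        products = project / product_folder
        if products.is_dir():
            inventory[project.name] = sorted(
                p.name for p in products.iterdir() if p.suffix.lower() == suffix
            )
    return inventory


# Build a tiny fake repository to demonstrate the scan
with tempfile.TemporaryDirectory() as repo:
    demo = Path(repo) / "20200415_example_site" / "04_gis_products"
    demo.mkdir(parents=True)
    for filename in ("orthomosaic.tif", "dsm.tif", "notes.txt"):
        (demo / filename).touch()
    layers = inventory_layers(repo)

print(layers)
```

A list like this could then be handed to whatever publishing step connects the layers to the portal.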

Ultimately, this has been a big leap forward for IGIS's informatics infrastructure, and it complements our significantly evolved pipeline for drone data collection and processing, shown in the accompanying figure.

- Author: Andy Lyons

A Unique Data Science Summit

Yesterday, several of us in the IGIS Program participated remotely in a very interesting summit on data science in agriculture. The summit was sponsored by the National Institute of Food and Agriculture (NIFA), which is the funding arm of the US Department of Agriculture (USDA). The goal of the summit was to hear examples of how data collection systems and analytics are playing a transformative role in agriculture, in order to help USDA develop an investment strategy for the next phase of their data science grant program. USDA has been funding innovative big data projects for some time, and will soon be rolling out a new initiative called FACT (Food and Agriculture Cyberinformatics and Tools Initiative).

It was exciting to hear the presentations about how rapid advancements in data collection systems, processing, and analytics are changing agriculture across the US and overseas. From sensor systems that support precision farming, to a new generation of genomics studies, to smarter production models and decision support systems, innovation is happening everywhere. The recorded presentations are online.

What Should USDA Fund?

NIFA is actively soliciting input from experts in the field about funding priorities, and has set up an online forum where people can provide feedback and vote for ideas. The forum is centered around six questions that were also discussed in breakout groups at yesterday's summit. The questions ask what the most promising opportunities are for:

- data-driven advances in agriculture and the food-production systems?

- enhancing cross-sector advances in data applications?

- data-driven advances to address societal well-being and consumer demands?

- addressing challenges in various facets of data management and application?

- ensuring future generations of data expertise?

- big data in communications, property rights, and communities?

Data Science in ANR

ANR Farm Advisors and Specialists have been exploring similar questions for years. To name just a couple of examples, the Precision Agriculture workgroup has been developing methods to measure and manage in-field variability. ANR has also sponsored several apps-for-ag hackathons, including one hosted this past summer in collaboration with the State Fair. Here at IGIS, we teach workshops on geospatial data analysis, data management, and remote sensing with drones. We also maintain ANR's network of flux towers, and have digitized historical records from ANR's network of Research and Extension Centers.

What do YOU think?

Many people think data analytics will be the engine for the next revolution in agriculture. What do you think the priority areas should be? NIFA is soliciting input via its Ideas Engine through the end of October. Take this unique opportunity to help shape the future of agricultural data science by letting your voice be heard!